With the rapid development of AI technology, the volume of data generated during large-model training and inference has grown explosively. InfiniBand (IB) currently remains the preferred network for high-density training clusters, but Ethernet has the advantage in public cloud architectures, where node counts are extremely large and load balancing is critical. Much of the future demand for inference computing power is expected to be met by public clouds (e.g., AWS, Alibaba Cloud). RoCE (RDMA over Converged Ethernet), a technology built on Ethernet, has therefore emerged as a preferred network architecture for inference and intelligent computing cloud scenarios, thanks to its compatibility with existing Ethernet infrastructure and its cost advantages.

What Is a RoCE Network?

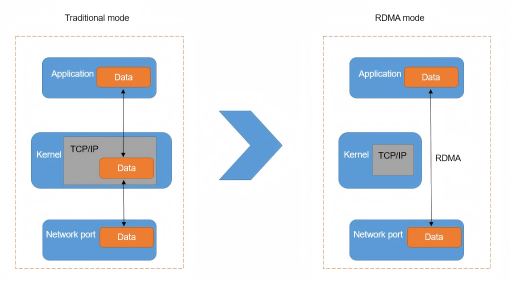

RoCE is a Remote Direct Memory Access (RDMA) technology that runs over Ethernet. RDMA bypasses the host's traditional kernel TCP/IP protocol stack, which reduces the complexity and latency of data transmission. RoCE's core design is to implement RDMA on top of lossless Ethernet, giving Ethernet the low latency and high throughput that high-performance computing requires. While retaining Ethernet compatibility, it brings in RDMA's direct memory access capability, eliminating CPU involvement in data copying and thus removing a key performance bottleneck of traditional Ethernet.

Figure 1: Comparison of data transmission modes. Traditional Ethernet relies on the TCP/IP protocol stack and the CPU for data copying (high latency), while RDMA mode bypasses the stack and accesses memory directly (low latency), which is the core of RoCE's performance advantage.

Compared with traditional Ethernet, RoCE's key difference is that it improves performance while retaining compatibility. It provides low latency and high bandwidth close to those of InfiniBand, yet it can run on existing Ethernet infrastructure without rebuilding the network environment. In practice, RoCE v2 achieves end-to-end latency of 1-5 microseconds, close to InfiniBand's 0.5-2 microseconds, while avoiding the latter's dedicated infrastructure costs.

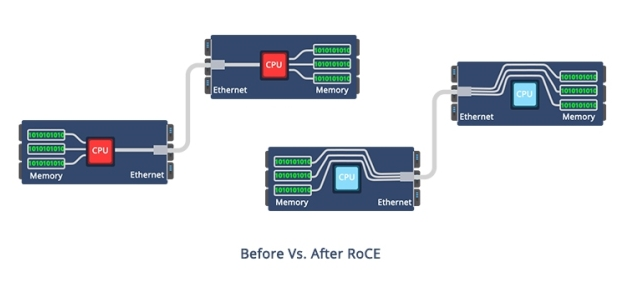

Figure 2: RoCE enables direct memory-to-memory Ethernet data transfer, bypassing CPU involvement compared to traditional Ethernet which relies on CPU for data handling.

RoCE has two main versions: RoCE v1 operates at the Ethernet link layer (relying on a single broadcast domain) and is suitable for small local clusters, while RoCE v2 runs at the IP layer (supporting routing across subnets), making it more adaptable to large-scale cloud and cross-cluster scenarios. This article focuses on RoCE v2, the mainstream choice for current intelligent computing.
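
The difference between the two versions is visible on the wire. RoCE v1 carries the InfiniBand transport directly in an Ethernet frame with EtherType 0x8915, while RoCE v2 wraps it in UDP/IP with destination port 4791. The sketch below classifies an untagged IPv4 frame on that basis; `classify_roce` and `build_test_frame` are hypothetical helper names, and VLAN tags and IPv6 are deliberately left out to keep the example short.

```python
import struct

ROCE_V1_ETHERTYPE = 0x8915  # RoCE v1: RDMA transport directly over Ethernet
IPV4_ETHERTYPE = 0x0800
UDP_PROTOCOL = 17
ROCE_V2_UDP_PORT = 4791     # destination UDP port used by RoCE v2


def classify_roce(frame: bytes) -> str:
    """Return 'RoCE v1', 'RoCE v2', or 'other' for an untagged Ethernet frame."""
    if len(frame) < 14:
        return "other"
    (ethertype,) = struct.unpack_from("!H", frame, 12)
    if ethertype == ROCE_V1_ETHERTYPE:
        return "RoCE v1"
    if ethertype != IPV4_ETHERTYPE or len(frame) < 14 + 20:
        return "other"
    ihl = (frame[14] & 0x0F) * 4      # IPv4 header length in bytes
    protocol = frame[14 + 9]          # IPv4 protocol field
    udp_offset = 14 + ihl
    if protocol != UDP_PROTOCOL or len(frame) < udp_offset + 8:
        return "other"
    (dst_port,) = struct.unpack_from("!H", frame, udp_offset + 2)
    return "RoCE v2" if dst_port == ROCE_V2_UDP_PORT else "other"


def build_test_frame(ethertype: int, udp_dst_port: int = 0) -> bytes:
    """Build a minimal synthetic frame for the demo (hypothetical helper)."""
    eth = bytes(6) + bytes(6) + struct.pack("!H", ethertype)
    if ethertype != IPV4_ETHERTYPE:
        return eth + bytes(32)
    ip = bytearray(20)
    ip[0] = 0x45                      # IPv4, header length 5 words (20 bytes)
    ip[9] = UDP_PROTOCOL
    udp = struct.pack("!HHHH", 49152, udp_dst_port, 8, 0)
    return eth + bytes(ip) + udp


if __name__ == "__main__":
    print(classify_roce(build_test_frame(ROCE_V1_ETHERTYPE)))
    print(classify_roce(build_test_frame(IPV4_ETHERTYPE, ROCE_V2_UDP_PORT)))
    print(classify_roce(build_test_frame(IPV4_ETHERTYPE, 53)))
```

Because RoCE v2 traffic is ordinary UDP/IP from the network's point of view, it can cross Layer 3 boundaries, which is what makes it routable across subnets.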

Value and Application Scenarios of RoCE

The value of RoCE is concentrated in three dimensions: cost-effectiveness, ecosystem compatibility, and cloud scenario adaptability. The specific application scenarios are as follows:

AI Inference Scenarios: Inference tasks are less sensitive to latency than training tasks, but they require large-scale deployment while controlling costs. RoCE can ensure performance and reduce hardware investment at the same time.

Intelligent Computing Cloud Scenarios: Whether it is a public cloud or a private cloud, the mainstream architecture is based on Ethernet. RoCE can be directly integrated into the existing cloud network without the need to build a separate dedicated network, making it the optimal choice for intelligent computing services to migrate to the cloud.

HPC and High-Speed Storage Scenarios: High-performance computing (HPC) and distributed storage have high requirements for data transmission efficiency. RoCE can achieve RDMA-level performance at a lower cost and replace some applications of InfiniBand or Fibre Channel.

Technical Requirements of RoCE Network Implementation

Building a RoCE network requires two things: suitable hardware and a suitable architecture design. Specifically, this means RoCE network adapters, switches that support lossless Ethernet, and an intelligent lossless network architecture.

RoCE Network Adapters

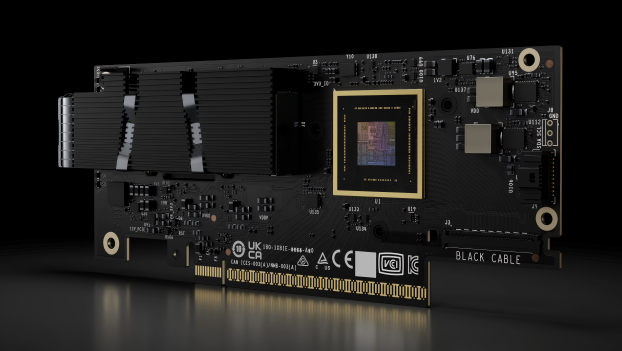

RoCE network adapters are the core interfaces for implementing RDMA functionality. They need hardware-level RDMA processing capabilities, which free the CPU from data transmission tasks, reduce latency, and increase bandwidth. Currently, the NVIDIA ConnectX series network adapters stand out in the market for their performance and compatibility. Among them, the latest ConnectX-8 network adapters offer bandwidth of up to 800Gb/s and support the PCIe Gen6 interface, which can meet the needs of ultra-large-scale inference clusters. They also support SR-IOV virtualization (critical for multi-tenant cloud environments) and NVMe over Fabrics (accelerating distributed storage access), aligning with the integrated needs of AI inference and cloud computing.

Figure 3: NVIDIA ConnectX-8 SuperNIC Adapter Card

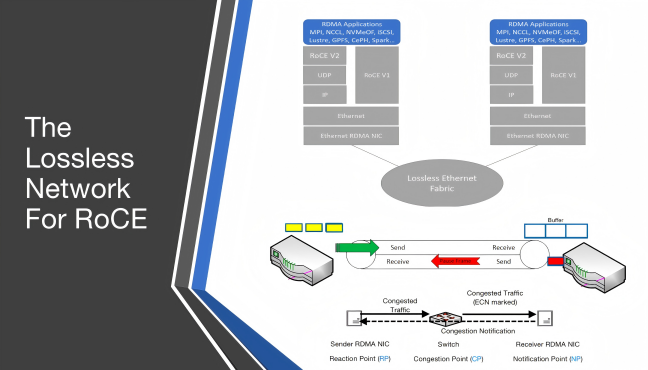

Ethernet Switches Supporting Lossless Ethernet

RoCE relies on lossless Ethernet to achieve low latency (avoiding retransmission caused by packet loss). Therefore, Ethernet switches must have the Priority Flow Control (PFC) function, which is the core technology for building a lossless network.
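
To make PFC more concrete, the sketch below builds a PFC pause frame as defined in IEEE 802.1Qbb: a MAC Control frame (EtherType 0x8808, opcode 0x0101) carrying a priority-enable vector and eight per-priority pause timers. The helper name `build_pfc_frame` is hypothetical, and pausing priority 3 in the demo is only an assumed convention for the RoCE traffic class, not something this article specifies.

```python
import struct
from typing import Dict

PFC_DST_MAC = bytes.fromhex("0180c2000001")  # reserved MAC Control multicast address
MAC_CONTROL_ETHERTYPE = 0x8808
PFC_OPCODE = 0x0101


def build_pfc_frame(src_mac: bytes, pause_quanta: Dict[int, int]) -> bytes:
    """Build a PFC frame (without FCS).

    pause_quanta maps a priority (0-7) to a pause time in quanta, where one
    quantum is 512 bit times. A value of 0 asks the sender to resume.
    """
    if len(src_mac) != 6:
        raise ValueError("src_mac must be 6 bytes")
    enable_vector = 0
    timers = [0] * 8
    for priority, quanta in pause_quanta.items():
        if not 0 <= priority <= 7:
            raise ValueError("priority must be in 0..7")
        if not 0 <= quanta <= 0xFFFF:
            raise ValueError("pause time must fit in 16 bits")
        enable_vector |= 1 << priority   # mark this priority's timer as valid
        timers[priority] = quanta
    payload = struct.pack("!HH8H", PFC_OPCODE, enable_vector, *timers)
    header = PFC_DST_MAC + src_mac + struct.pack("!H", MAC_CONTROL_ETHERTYPE)
    return (header + payload).ljust(60, b"\x00")  # pad to minimum frame size


if __name__ == "__main__":
    # Assumed example: pause only priority 3 for the maximum time.
    frame = build_pfc_frame(bytes.fromhex("020000000001"), {3: 0xFFFF})
    print(frame.hex())
```

Only the paused priority stops sending; traffic in the other seven classes keeps flowing, which is how PFC protects RDMA traffic from drops without stalling the whole link.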

NVIDIA offers a full range of Ethernet switches that support RoCE.

SN3000 Switches: With port speeds of up to 200Gb/s, they are suitable for small and medium-sized inference clusters.

SN4000 Switches: Each port supports 400Gb/s bandwidth, and the switch supports port aggregation, making it suitable for 1000-card-level inference clusters.

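SN5000 Switches: With port speeds of up to 800Gb/s, they support ultra-large-scale intelligent computing cloud scenarios. The series is built on NVIDIA's Spectrum-4 Ethernet switch ASIC (NVSwitch, by contrast, is an NVLink component rather than an Ethernet switch chip), and multiple switches can be combined into larger fabrics to improve cluster scalability.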

Intelligent Lossless Ethernet Architecture

In addition to hardware, a RoCE network needs an intelligent lossless architecture to reach its maximum performance and reliability. The core technologies include flow control (such as PFC), congestion control (such as ECN-based congestion notification), and storage network optimization.

Figure 5: Architecture of a lossless Ethernet network for RoCE, showing the protocol stacks of RoCE v1/v2 and the congestion notification mechanism that enables reliable RDMA over Ethernet.
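
As a rough illustration of how congestion notification is commonly driven on the switch side, the sketch below computes a RED-style ECN marking probability from queue depth, the scheme typically paired with RoCE v2 congestion control such as DCQCN. The function name and the threshold values (`k_min_kb`, `k_max_kb`, `p_max`) are assumptions chosen for the example, not recommended settings.

```python
def ecn_mark_probability(queue_kb: float, k_min_kb: float,
                         k_max_kb: float, p_max: float) -> float:
    """Return the probability of ECN-marking a packet at a given queue depth.

    Below k_min_kb nothing is marked, above k_max_kb everything is marked,
    and in between the probability rises linearly from 0 to p_max.
    """
    if not 0 <= k_min_kb < k_max_kb:
        raise ValueError("need 0 <= k_min_kb < k_max_kb")
    if not 0.0 <= p_max <= 1.0:
        raise ValueError("p_max must be in [0, 1]")
    if queue_kb <= k_min_kb:
        return 0.0
    if queue_kb >= k_max_kb:
        return 1.0
    return p_max * (queue_kb - k_min_kb) / (k_max_kb - k_min_kb)


if __name__ == "__main__":
    # Hypothetical thresholds: start marking at 100 KB, mark everything at 400 KB.
    for depth in (50, 100, 250, 399, 400, 800):
        p = ecn_mark_probability(depth, k_min_kb=100, k_max_kb=400, p_max=0.2)
        print(f"queue={depth:>3} KB  mark probability={p:.3f}")
```

In a design like this, the PFC pause threshold is usually placed above `k_max_kb`, so that senders slow down in response to ECN marks first and PFC acts only as a last resort against packet loss.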

Advantages of RoCE Network

The hardware adaptation (e.g., RoCE NICs, lossless switches) and intelligent architecture design outlined above lay the foundation for RoCE's core advantages over InfiniBand and traditional Ethernet. These advantages are concentrated in four key dimensions: ecosystem, cost, scalability, and cloud adaptability.

| Dimension | RoCE | InfiniBand (for comparison) |
|---|---|---|
| Ecosystem Openness | Built on the Ethernet ecosystem with multiple vendors (such as Huawei and H3C), so there is no single-vendor lock-in; the supply chain is stable and response times are short. | The InfiniBand ecosystem is highly concentrated around NVIDIA, with few hardware options and a regionally limited supply chain. |
| Lower Cost | Can reuse existing Ethernet infrastructure (such as cables) without dedicated equipment; the overall procurement cost of Ethernet switches and network cards is 30%-50% lower than that of InfiniBand. | InfiniBand requires dedicated switches, network adapters, and cables, with high initial investment and higher maintenance costs. |
| High Scalability | Supports 10G-800G bandwidth, with future evolution to 1.6Tbps; a Spine-Leaf switching architecture scales out by adding leaf and spine switches. | InfiniBand has high single-port bandwidth, but the overall expansion cost of the switch fabric is high. |
| Cloud-Native Adaptability | Can connect directly to public clouds (such as AWS) and private clouds without reconstructing the network, and supports fast business access. | InfiniBand reaches the cloud network through gateways (such as an IB-to-Ethernet gateway), which adds latency and complexity and makes it a poor fit for cloud-native scenarios. |
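
The scalability of a Spine-Leaf fabric can be estimated with simple arithmetic. The sketch below assumes a non-blocking (1:1) design built from identical switches with `radix` ports each, where every leaf uses half its ports for endpoints and half for uplinks. Function names are hypothetical and the 64-port radix in the demo is only an example value.

```python
def two_tier_leaf_spine(radix: int) -> dict:
    """Size a non-blocking two-tier leaf-spine fabric of identical switches."""
    if radix < 2 or radix % 2:
        raise ValueError("radix must be a positive even number")
    downlinks_per_leaf = radix // 2   # ports facing endpoints
    spines = radix // 2               # one uplink from each leaf to each spine
    leaves = radix                    # each spine port connects one leaf
    return {
        "leaves": leaves,
        "spines": spines,
        "endpoints": leaves * downlinks_per_leaf,
    }


def three_tier_fat_tree_endpoints(radix: int) -> int:
    """Maximum endpoints of a non-blocking three-tier fat-tree: radix^3 / 4."""
    if radix < 2 or radix % 2:
        raise ValueError("radix must be a positive even number")
    return radix ** 3 // 4


if __name__ == "__main__":
    print(two_tier_leaf_spine(64))
    print(three_tier_fat_tree_endpoints(64))
```

Under these assumptions, two tiers of 64-port switches reach 2,048 endpoints and a third tier raises the ceiling to 65,536, so growth comes from adding switches and tiers rather than from replacing the fabric.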

Comparison of RoCE and InfiniBand in Business Scenarios

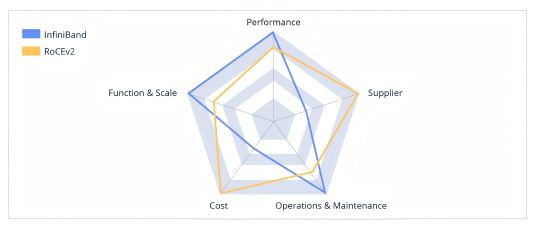

RoCE and InfiniBand are not substitutes for each other but complementary solutions for different business scenarios. They differ significantly in performance, scale, operation and maintenance, cost, and suppliers:

Figure 6: Comparison between InfiniBand and RoCE v2

Performance

In terms of performance, since InfiniBand has lower end-to-end latency than RoCE v2, networks built on InfiniBand hold an edge in application-layer performance. However, the performance of RoCE v2 still meets the requirements of most intelligent computing scenarios.

Business Scale

InfiniBand supports single-cluster GPU deployments of up to 10,000 cards with no degradation in overall performance. RoCE v2 networks can handle single-cluster scales of up to 1,000 GPUs without significant drops in overall network performance.

Operation and Maintenance

In terms of operation and maintenance, InfiniBand is more mature than RoCE v2, with established capabilities such as multi-tenant isolation and operational diagnostic tools.

Cost

Regarding cost, InfiniBand is more expensive than RoCE v2. This is primarily due to the higher cost of InfiniBand switches and transceiver modules compared to Ethernet switches.

Suppliers

When it comes to suppliers, InfiniBand is predominantly supplied by NVIDIA. RoCE v2 has a broader range of suppliers, though NVIDIA remains a top performer in terms of product quality.

Conclusion

By combining Ethernet compatibility, RDMA performance, and low cost, RoCE has become a core network solution for scenarios such as AI inference, intelligent computing clouds, and HPC. RoCE does not replace InfiniBand; it serves as a more cost-effective alternative in scenarios where its performance is sufficient. For enterprises pursuing cost control, needing cloud architecture integration, or deploying inference clusters, RoCE is the optimal solution. For ultra-large-scale training and latency-critical scenarios, however, InfiniBand remains irreplaceable. As RoCE technology continues to evolve and its ecosystem matures, its application scope will expand further, making it a key bridge between computing and cloud in data center networks.
