The abbreviations CPU, GPU, NPU, and TPU appear everywhere, but what actually distinguishes these processors? As artificial intelligence workloads grow, they are taking on increasingly important roles across industries. This article summarizes each processor's core concepts, key features, and typical application scenarios.

CPU

The CPU (Central Processing Unit) serves as the "brain" of a computer, executing diverse computational tasks and coordinating the rest of the system. It excels at general-purpose arithmetic and logic operations, offering high flexibility and universality. Found in PCs, servers, smartphones, and most other computing devices, it remains the most common general-purpose processor.

Key Features:

- Core Strength: Powerful logic operations and control capabilities, optimized for serial tasks.

- Flexibility: Can run virtually any kind of program code.

- Versatility: Suitable for an exceptionally broad range of applications.

- Major Manufacturers: Intel and AMD (x86), plus Arm-based designs from vendors such as Apple and Qualcomm.
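
To make "optimized for serial tasks" concrete, here is a minimal sketch of a workload where every step depends on the previous result and on a data-dependent branch. The function name `collatz_steps` and the choice of the Collatz rule are illustrative assumptions, not something taken from a particular CPU or library.

```python
def collatz_steps(n: int) -> int:
    """Count the steps needed to reach 1 under the Collatz rule."""
    steps = 0
    while n != 1:
        # The branch taken depends on the current value, and the next
        # value depends on the result of this step, so the iterations
        # of one chain cannot be spread across many cores.
        n = n // 2 if n % 2 == 0 else 3 * n + 1
        steps += 1
    return steps


print(collatz_steps(6))  # 6 -> 3 -> 10 -> 5 -> 16 -> 8 -> 4 -> 2 -> 1, prints 8
```

A chain like this benefits from fast branching and low latency on a single core rather than from a large number of cores.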

GPU

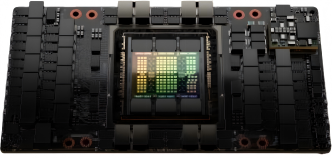

Originally designed for rapid graphics and image rendering, GPUs (Graphics Processing Units) far outperform CPUs on highly parallel workloads. This capability has made them indispensable in AI fields requiring massive parallel computations, such as deep learning.

Key Features:

- Core Strength: Exceptionally efficient at large-scale parallel computing.

- Architecture: Built from thousands of small cores (called CUDA cores on NVIDIA hardware and stream processors on AMD hardware) that execute vast quantities of simple operations simultaneously.

- Applications: Excels in highly parallel tasks like image/video processing, scientific computing, and training deep learning/machine learning models.

- Major Manufacturers: NVIDIA, AMD, Intel.
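
The "many simple operations at once" model can be sketched in plain Python. The names `saxpy_kernel` and `launch` are hypothetical and do not belong to any GPU API. The loop below runs the work sequentially and only shows how the computation is structured as one small, independent task per output element.

```python
def saxpy_kernel(i, a, x, y, out):
    """Work done by one hypothetical 'thread': a single output element."""
    out[i] = a * x[i] + y[i]


def launch(kernel, n, *args):
    """Stand-in for a GPU launch: invoke the kernel once per index.

    A real GPU would run many of these invocations at the same time.
    This loop runs them one after another and only shows the structure.
    """
    for i in range(n):
        kernel(i, *args)


x = [1.0, 2.0, 3.0, 4.0]
y = [10.0, 20.0, 30.0, 40.0]
out = [0.0] * len(x)
launch(saxpy_kernel, len(x), 2.0, x, y, out)
print(out)  # [12.0, 24.0, 36.0, 48.0]
```

Because no invocation reads another invocation's output, the order of execution does not matter, which is the property that lets this kind of work scale across many cores.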

NPU

The NPU (Neural Processing Unit) is a processor specifically designed for AI applications, particularly neural network computations. It delivers exceptional energy efficiency when executing AI tasks, making it a core hardware component for future intelligent devices.

Key Features:

- Core Strength: Specialized in neural network operations.

- Hardware Architecture: Integrates numerous dedicated computing units with high-performance memory subsystems for rapid model data access, typically employing dedicated instruction sets for hardware-level neural network acceleration.

- Energy Efficiency: Low-power design, ideal for mobile and edge devices.

- Performance: On the specific AI workloads it targets, it can significantly outperform general-purpose CPUs and GPUs, particularly in performance per watt.

- Major Manufacturers: Huawei, Cambricon, Qualcomm, Apple.
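
The neural network operations that NPUs accelerate are dominated by multiply-accumulate (MAC) steps. As a rough sketch, assuming a single fully connected layer with a ReLU activation, the work looks like this. The function `dense_relu` and the sample values are made up for illustration and do not model any specific NPU.

```python
def dense_relu(inputs, weights, biases):
    """One fully connected layer followed by ReLU.

    weights[j][i] connects input i to output neuron j.
    """
    outputs = []
    for w_row, b in zip(weights, biases):
        acc = b
        for x, w in zip(inputs, w_row):
            acc += x * w  # one multiply-accumulate (MAC)
        outputs.append(max(0.0, acc))  # ReLU: clamp negatives to zero
    return outputs


inputs = [1.0, 2.0, 3.0]
weights = [[0.5, 1.0, 0.25], [-0.5, 0.25, -1.0]]
biases = [0.5, 1.0]
print(dense_relu(inputs, weights, biases))  # [3.75, 0.0]
```

Dedicated hardware can perform many of these MAC steps per clock cycle and keep the weights close to the compute units, which is where the efficiency gain over a general-purpose core comes from.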

TPU

The TPU (Tensor Processing Unit), developed by Google, is a dedicated AI accelerator designed to speed up the tensor operations at the heart of neural networks. It was introduced alongside Google's TensorFlow deep learning framework and, through the XLA compiler, also runs JAX and PyTorch workloads. It is mainly deployed in Google Cloud to power Google's AI platforms and large-scale model training.

Key Features:

- Core Strength: Deeply optimized for tensor operations, with first-class TensorFlow support, delivering high performance in deep learning inference and training.

- Hardware Architecture: Features highly optimized tensor computing units with hardware-level acceleration for common neural network operators.

- Memory Efficiency: Designed with an efficient memory system to minimize access bottlenecks.

- Deployment: Primarily used in Google Cloud AI services and internal large-scale model training, with a low-power variant (Edge TPU) for edge applications.

- Major Manufacturers: Google
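
The tensor operation that matters most here is matrix multiplication. The sketch below does not model TPU hardware. It illustrates blocking (tiling), a general technique for reusing data once it has been loaded, which is one common way to reduce memory access bottlenecks. The function names and the tile size of 2 are assumptions chosen for illustration.

```python
def matmul_naive(a, b):
    """Reference matrix product, used to check the tiled version."""
    n, k, m = len(a), len(b), len(b[0])
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(m)]
            for i in range(n)]


def matmul_tiled(a, b, tile=2):
    """Matrix product computed block by block.

    Each (i0, j0, p0) triple selects a small tile of the inputs, and all
    work on that tile finishes before the next tile is touched.
    """
    n, k, m = len(a), len(b), len(b[0])
    c = [[0] * m for _ in range(n)]
    for i0 in range(0, n, tile):
        for j0 in range(0, m, tile):
            for p0 in range(0, k, tile):
                for i in range(i0, min(i0 + tile, n)):
                    for j in range(j0, min(j0 + tile, m)):
                        acc = c[i][j]
                        for p in range(p0, min(p0 + tile, k)):
                            acc += a[i][p] * b[p][j]
                        c[i][j] = acc
    return c


a = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
b = [[9, 8, 7], [6, 5, 4], [3, 2, 1]]
assert matmul_tiled(a, b) == matmul_naive(a, b)
print(matmul_tiled(a, b))  # [[30, 24, 18], [84, 69, 54], [138, 114, 90]]
```

In hardware, the inner tile computation is the part a dedicated matrix unit would perform, while the outer loops correspond to moving tiles between memory and the compute units.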

Core Characteristics Comparison

| Processor | Primary Role | Key Advantages | Parallel Capability | Typical Scenarios | Power Efficiency |
|---|---|---|---|---|---|
| CPU | General-purpose processor | Logic control, flexibility, versatility | Limited | OS, general applications, control logic | Moderate |
| GPU | Parallel computing accelerator | Massively parallel computing | Exceptional | Graphics rendering, scientific computing, DL training | High power consumption |
| NPU | Dedicated AI processor (neural networks) | High-efficiency neural net computing, ultra-low power | Strong | On-device AI (phones, IoT, autonomous driving), edge inference | Ultra-low power |
| TPU | Dedicated AI accelerator (TensorFlow/tensor-optimized) | Extreme-performance tensor ops | Exceptional | Cloud AI services, large-scale training | Cloud: high performance-to-power ratio; Edge: low power consumption |

Conclusion

The CPU remains the cornerstone of general computing, excelling in logic control but limited in parallel efficiency. GPUs, with their massively parallel architecture, dominate deep learning training and graphics processing, particularly the matrix operations behind both. NPUs are purpose-built for on-device AI, delivering ultra-low power consumption and real-time inference through hardware-level neural network acceleration, making them essential for smart devices and edge computing. TPUs, optimized for tensor operations and large-scale cloud workloads, offer very high tensor computing performance within Google's ecosystem.

These four processor types complement each other in versatility, parallel capability, energy efficiency, and application scenarios, collectively driving the evolution of specialized computing architectures.
