As computing systems shift toward liquid cooling, an often-overlooked component, the optical module, is becoming a key focus. In highly integrated environments like NVIDIA's GB200/GB300, high-speed optical modules are not only vital for data transmission but also major heat sources. Integrating them into the system's liquid-cooling loop has evolved from an optimization to a technical necessity.

Figure 1: Data center liquid cooling system

1. Optical Modules: Emerging Heat Hubs

As data center networks advance to 800G and 1.6T speeds, optical module power consumption has surged: 800G transceivers already exceed 15 W, and 1.6T modules are projected to surpass 30 W. On dense switches in Blackwell-class systems hosting dozens of such ports, this creates a "thermal wall," with total power reaching several kilowatts. Without effective cooling, the surface temperature of optical module panels could exceed 85°C, far above safe limits, directly threatening system stability.
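As a rough illustration of how per-module power adds up across a faceplate, the sketch below multiplies the lower-bound per-module figures quoted above by a port count. The port counts and the function name `faceplate_power_watts` are assumptions chosen for illustration, and the result covers only the optical modules, not the switch ASIC or other components.

```python
# Rough aggregate optical-module power for one fully populated faceplate.
# Per-module watts are the lower-bound figures from the text;
# the port counts used below are illustrative assumptions.

PER_MODULE_WATTS = {"800G": 15.0, "1.6T": 30.0}


def faceplate_power_watts(port_count: int, module_type: str) -> float:
    """Return total optical-module power in watts for port_count modules."""
    if port_count < 0:
        raise ValueError("port_count must be non-negative")
    return port_count * PER_MODULE_WATTS[module_type]


if __name__ == "__main__":
    for ports in (32, 64, 72):  # hypothetical port counts
        for kind in PER_MODULE_WATTS:
            total = faceplate_power_watts(ports, kind)
            print(f"{ports} x {kind}: {total / 1000:.2f} kW from optics alone")
```

Because the per-module values are lower bounds, real totals would be higher than what this prints.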

2. The "Hotspot" Problem in Liquid-Cooled Systems

In a liquid-cooled chassis, airflow is minimal. Traditional finned modules designed for air cooling lose their heat dissipation path, so heat is trapped locally. The resulting thermal hotspots degrade DSP and laser performance, raise the bit error rate (BER), and accelerate aging. Integrating optical modules into the liquid loop is therefore no longer optional; it is essential.

3. Integration Becomes Inevitable

In Blackwell-class cabinets with sealed liquid environments, air-cooled modules effectively sit inside an "insulated box," which inevitably leads to overheating. The industry's rapid development of OSFP-RHS (riding heat sink, i.e., flat-top) optical modules and immersion-compatible modules is a direct response to this challenge.

4. Core Cooling Technologies: Cold Plate vs. Immersion

To integrate optical modules into system-level cooling, two main technologies have emerged: Cold Plate Cooling and Immersion Cooling. Cold plate designs (OSFP-RHS based) are easier to service and retrofit, while immersion offers superior thermal performance at higher cost and complexity. Both will coexist for years to come.

4.1 Cold Plate Cooling

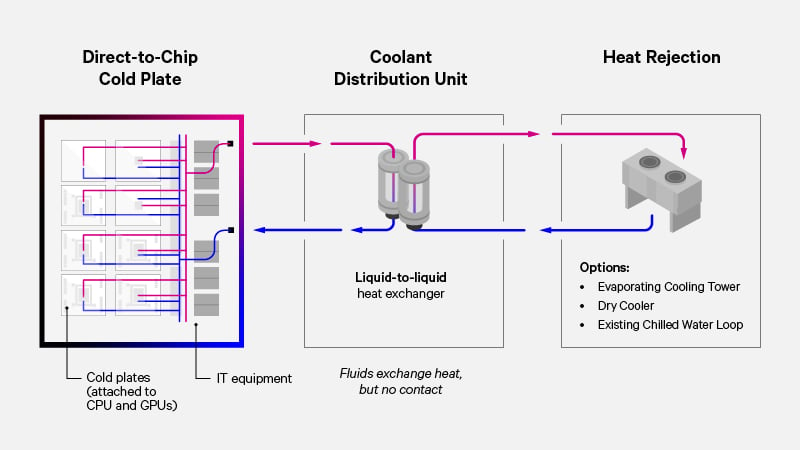

Principle: A metal cold plate with internal coolant channels is built into the chassis. The flat-top optical module (OSFP-RHS) contacts the cold plate via thermal interface material (TIM). Heat from the module is transferred through conduction to the cold plate, then carried away by the circulating coolant—similar to a "heat-dissipating patch" that directly draws heat from the module.

Figure 2: Schematic of Cold Plate Cooling Working Principle. This solution achieves rapid heat transfer through direct contact between the cold plate and the optical module.
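The conduction path described above can be approximated as a series thermal-resistance chain from the module's top surface, through the TIM, into the cold plate and coolant. The sketch below is a minimal steady-state estimate under that assumption. Every numeric value (TIM thickness and conductivity, contact area, cold plate resistance, coolant temperature) is an illustrative placeholder rather than vendor data, and the function names are hypothetical.

```python
# Minimal series thermal-resistance estimate for a cold-plate-cooled module.
# All numeric inputs in the demo are illustrative assumptions.


def tim_resistance_k_per_w(thickness_m: float,
                           conductivity_w_per_mk: float,
                           contact_area_m2: float) -> float:
    """Conduction resistance of a flat TIM layer: R = t / (k * A)."""
    if min(thickness_m, conductivity_w_per_mk, contact_area_m2) <= 0:
        raise ValueError("all inputs must be positive")
    return thickness_m / (conductivity_w_per_mk * contact_area_m2)


def module_case_temp_c(power_w: float, coolant_temp_c: float,
                       r_tim: float, r_cold_plate: float) -> float:
    """Steady-state case temperature with the two resistances in series."""
    return coolant_temp_c + power_w * (r_tim + r_cold_plate)


if __name__ == "__main__":
    power_w = 30.0          # assumed module power
    coolant_temp_c = 40.0   # assumed coolant temperature
    r_cold_plate = 0.10     # assumed plate-to-coolant resistance, K/W
    full_area_m2 = 1.0e-3   # assumed nominal contact area
    # Compare full contact with a case where only half the area touches.
    for coverage in (1.0, 0.5):
        r_tim = tim_resistance_k_per_w(1.0e-4, 5.0, full_area_m2 * coverage)
        t_case = module_case_temp_c(power_w, coolant_temp_c, r_tim, r_cold_plate)
        print(f"coverage {coverage:.0%}: R_tim={r_tim:.3f} K/W, case={t_case:.1f} C")
```

Halving the effective contact area doubles the TIM resistance in this model, which is one simple way to see why contact quality matters.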

Key Enabler — OSFP-RHS (Flat Top) Packaging: Defined by the OSFP MSA group in 2024, the OSFP-RHS flat-top design ensures maximum contact area between the optical module and the cold plate, enabling optimal thermal conductivity. Compared to traditional finned modules, it offers up to 40% better cooling efficiency.

Advantages:

- Maintains hot-pluggable serviceability, enabling on-site maintenance without system shutdown.

- Compatible with existing data center infrastructure, requiring only minor retrofits.

- Lower cost and easier deployment compared to immersion cooling.

Limitations:

- Only the top surface of the module is fully cooled; side or bottom heat sources may not be addressed.

- Heat transfer efficiency depends on the quality of TIM application and contact pressure; poor alignment can lead to cooling gaps.

4.2 Immersion Cooling

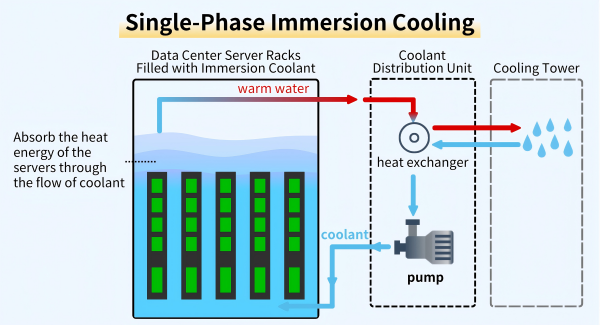

Principle: Entire servers, including optical modules, are submerged in a dielectric coolant (a fluid that is non-conductive and non-corrosive to electronic components). Heat from the optical modules and other components is transferred directly to the coolant, eliminating localized hotspots.

Types:

- Single-phase: Uses high-boiling-point dielectric fluids (e.g., mineral oil, synthetic esters) circulated by pumps. Heat is dissipated through fluid convection without phase change.

Figure 3: Schematic of Single-Phase Immersion Cooling Working Principle. Coolant circulates to absorb heat from servers and transfers it to the cooling tower.

-

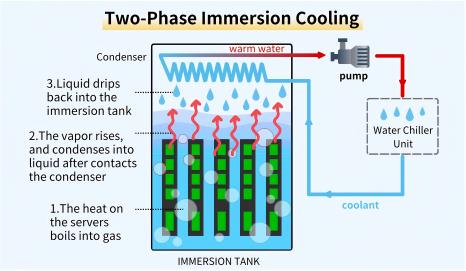

Two-phase: Uses low-boiling-point refrigerants (e.g., fluorinated compounds). When in contact with hot components, the fluid vaporizes (absorbing large amounts of heat via latent heat), then rises to the condenser, condenses back into liquid, and drips back into the tank—achieving the highest cooling efficiency.

Figure 4: Schematic of Two-Phase Immersion Cooling Working Principle. The phase change of the coolant enables efficient heat absorption and dissipation.
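The difference between the two immersion types can be made concrete with two textbook heat-balance relations: sensible heating for single-phase (Q = m·cp·ΔT) and latent heat for two-phase (Q = m·h_fg). The sketch below applies both. The fluid properties are order-of-magnitude placeholders assumed for illustration, not data for any specific coolant, and the function names are hypothetical.

```python
# Heat-balance sketch for single-phase vs. two-phase immersion cooling.
# Fluid properties in the demo are illustrative placeholders.


def single_phase_mass_flow_kg_s(heat_w: float, cp_j_per_kgk: float,
                                delta_t_k: float) -> float:
    """Coolant mass flow needed to carry heat_w with a delta_t_k rise."""
    if cp_j_per_kgk <= 0 or delta_t_k <= 0:
        raise ValueError("cp and delta T must be positive")
    return heat_w / (cp_j_per_kgk * delta_t_k)


def two_phase_vapor_rate_kg_s(heat_w: float, h_fg_j_per_kg: float) -> float:
    """Mass of fluid vaporized per second to absorb heat_w as latent heat."""
    if h_fg_j_per_kg <= 0:
        raise ValueError("latent heat must be positive")
    return heat_w / h_fg_j_per_kg


if __name__ == "__main__":
    heat_w = 2000.0        # assumed heat load
    cp = 2000.0            # J/(kg*K), assumed for an oil-like dielectric
    delta_t = 10.0         # K, assumed allowable coolant temperature rise
    h_fg = 100_000.0       # J/kg, assumed for a fluorinated fluid
    print(f"single-phase flow: {single_phase_mass_flow_kg_s(heat_w, cp, delta_t):.3f} kg/s")
    print(f"two-phase boil-off: {two_phase_vapor_rate_kg_s(heat_w, h_fg):.3f} kg/s")
```

Under these assumed properties, far less fluid has to change state than has to be pumped past the components, which is the intuition behind the latent-heat advantage.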

Design Challenges:

- Requires hermetic sealing of the chassis to prevent coolant leakage and ingress of external contaminants.

- Module materials must be resistant to chemical degradation by the coolant (e.g., avoiding rubber aging in oil-based fluids).

- High-speed optical connectors need to be re-engineered for liquid environments to maintain signal integrity.

Advantages:

- Unmatched cooling efficiency, eliminating hotspots entirely.

- Cuts cooling-related energy consumption by up to 95%, according to a 2024 report by GreenIT (aligning with Green Data Center initiatives).

Limitations:

- High upfront and operational cost.

- Complex maintenance: components must be removed from liquid, cleaned of residues, and dried before servicing.

5. Technology Comparison: Cold Plate vs Single-Phase vs Two-Phase

The choice between cold plate and immersion cooling is not only a technical decision; it also reflects data center operators' priorities regarding service efficiency, risk appetite, and long-term investment strategy. Cold plate cooling is an evolutionary and more robust path, while immersion cooling is a revolutionary push for ultimate efficiency. The specific differences are shown in the table below:

| Comparison Parameter | Cold Plate Cooling (with OSFP-RHS) | Single-Phase Immersion Cooling | Two-Phase Immersion Cooling |
|---|---|---|---|
| Heat Dissipation Principle | Conduction (module-cold plate) + Local Convection | Full Immersion, Liquid Convection (No Phase Change) | Full Immersion, Liquid Boiling Phase Change (Using Latent Heat of Vaporization) |
| Heat Dissipation Efficiency | High | Very High | Highest |
| Module Packaging Requirement | OSFP-RHS (Flat Top, No Sealing Needed) | Fully Sealed, Materials Must Be Compatible with Dielectric Oil | Fully Sealed, Materials Must Be Compatible with Low-Boiling-Point Fluorinated Compounds |
| Serviceability (MTTR) | Excellent (Hot-Swappable, No Shutdown Required) | Poor (Need to Remove Equipment and Clean Liquid Residues) | Very Poor (Need to Handle Vapor and Dry Equipment) |
| Impact on Infrastructure | Medium (Requires In-Chassis Cold Plate + CDU) | High (Requires Specialized Liquid Tanks + Liquid Circulation System) | Very High (Requires Sealed Liquid Tanks + Condensing System) |
| Type of Cooling Liquid | Water-Glycol Mixture | Dielectric Oil/Synthetic Ester | Low-Boiling-Point Fluorinated Compound |
| Core Advantage | Convenient Maintenance, Compatible with Existing Facilities | Uniform Heat Dissipation, Relatively Low Cooling Liquid Cost | Highest Heat Dissipation Efficiency, No Circulation Pump Required |
| Core Challenge | Relies on TIM Quality and Contact Pressure, Incomplete Cooling | Poor Serviceability, Complex Liquid Handling | Expensive Cooling Liquid, High System Complexity |
| Target Application | Mainstream Enterprise/Cloud Data Centers (Need to Modify Existing Facilities) | High-Density AI Clusters (High Heat Dissipation Requirements, Acceptable Maintenance Complexity) | Top-Tier Supercomputers (e.g., Computing Centers, Research Supercomputers; Pursuit of Ultimate Energy Efficiency, Low Maintenance Frequency) |

- Cooling Efficiency: Two-Phase Immersion > Single-Phase Immersion > Cold Plate

- Serviceability (MTTR): Cold Plate > Single-Phase Immersion > Two-Phase Immersion

- Cost & Complexity: Two-Phase Immersion > Single-Phase Immersion > Cold Plate
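One way to reason about these three orderings together is to encode them as ordinal ranks and weight them by an operator's priorities. The sketch below does this as a toy scoring exercise. The 1 to 3 rank values simply restate the orderings above (with cost and complexity inverted into "simplicity"), while the weights, the names `RANKS` and `rank_options`, and the weighted-sum approach itself are assumptions for illustration, not an established selection method.

```python
# Toy weighted ranking of cooling options based on the ordinal orderings above.
# Rank 3 = best of the three options on that criterion, 1 = worst.
# "simplicity" is the inverse of the cost-and-complexity ordering.

RANKS = {
    "cold_plate": {"efficiency": 1, "serviceability": 3, "simplicity": 3},
    "single_phase_immersion": {"efficiency": 2, "serviceability": 2, "simplicity": 2},
    "two_phase_immersion": {"efficiency": 3, "serviceability": 1, "simplicity": 1},
}


def rank_options(weights: dict) -> list:
    """Return option names sorted from highest to lowest weighted score."""
    scores = {
        name: sum(weights.get(criterion, 0) * rank
                  for criterion, rank in ranks.items())
        for name, ranks in RANKS.items()
    }
    return sorted(scores, key=scores.get, reverse=True)


if __name__ == "__main__":
    # Hypothetical priorities: a retrofit site vs. a density-driven build.
    retrofit = {"efficiency": 1, "serviceability": 2, "simplicity": 1}
    density_first = {"efficiency": 5, "serviceability": 1, "simplicity": 1}
    print("retrofit:", rank_options(retrofit))
    print("density-first:", rank_options(density_first))
```

Ordinal ranks hide the size of the gaps between options, so a sketch like this is only useful for structuring a discussion, not for making the decision.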

In practice, cold plate solutions suit retrofits and operations that prioritize low MTTR (Mean Time to Repair), while immersion appeals to hyperscale "greenfield" AI data centers seeking maximum density and efficiency. The two will evolve in parallel, with cold plates dominating mainstream markets and immersion leading in ultra-dense AI/HPC deployments.

6. Strategic Outlook (Industry Trends)

The rise of NVIDIA Blackwell marks a decisive shift in the industry: liquid-cooled optical modules are now central to system thermal design. Key trends include:

- End of the air-cooling era: Liquid cooling becomes the foundation for all future AI/HPC systems.

- Optical modules as primary heat sources: Designers must incorporate optical module cooling into early system architecture planning (e.g., reserving cold plate slots or immersion tank space), rather than treating it as a post-design optimization.

- Dual-path evolution: Cold plate (OSFP-RHS) and immersion cooling will coexist.

- Toward deep integration: Co-Packaged Optics (CPO) merges optics and switch ASICs in one package, cutting interconnect power by 30-50%. Liquid cooling will be key to its adoption.

7. Conclusion

The thermal revolution sparked by NVIDIA's Blackwell architecture is reshaping digital infrastructure. Liquid-cooled optical modules are at the heart of this transformation. Their integration directly determines the stability, efficiency, and scalability of next-generation computing systems. Those who master this integration will lead in the exascale computing era.
