Data center operators typically upgrade their network architectures periodically, but many data centers are now approaching capacity limits and urgently need more efficient solutions that support higher transmission rates with lower energy consumption.

In data centers, network topology design is typically determined by the port speed and number of servers. As the foundation of the entire data center network, the network interface cards (NICs) on servers must scale as data centers grow and bandwidth demands rise, supporting higher transmission rates and more efficient data flow.

I. 800G High-Speed Optical Solution

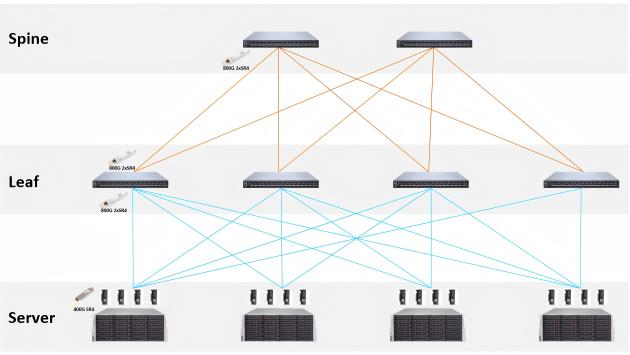

1.1 Newly Built 800G Data Center Network

For newly built 800G data centers, servers are usually newly procured and commonly equipped with NVIDIA ConnectX-7 400G network adapters. At the optical interconnect level, 400G SR4 optical modules are deployed on the server side, while 800G 2xSR4 optical modules are used on the switch side. Each 800G 2xSR4 module supports breakout connections, interfacing simultaneously with two independent 400G SR4 modules, which significantly improves port utilization and connection density.
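The density gain comes from simple fan-out arithmetic. The sketch below assumes every 800G 2xSR4 switch port is broken out to two 400G ConnectX-7 servers; the function names and the 32-port leaf switch in the usage lines are hypothetical, chosen only for illustration.

```python
# Minimal sketch: server fan-out when each 800G 2xSR4 switch port breaks out
# into two 400G SR4 links (one per ConnectX-7 400G server NIC).
# Port counts used in the example are hypothetical.

MODULE_RATE_GBPS = 800   # 800G 2xSR4 on the switch side
SERVER_RATE_GBPS = 400   # 400G SR4 on each server NIC


def servers_per_switch(downlink_ports: int) -> int:
    """Return how many 400G servers a given number of 800G ports can attach."""
    if downlink_ports < 0:
        raise ValueError("port count must be non-negative")
    links_per_port = MODULE_RATE_GBPS // SERVER_RATE_GBPS  # 2 breakout links
    return downlink_ports * links_per_port


def ports_needed(servers: int) -> int:
    """Return the minimum number of 800G ports needed for `servers` 400G NICs."""
    links_per_port = MODULE_RATE_GBPS // SERVER_RATE_GBPS
    return -(-servers // links_per_port)  # ceiling division


if __name__ == "__main__":
    # Hypothetical leaf switch with 32 OSFP ports set aside for server downlinks.
    print(servers_per_switch(32))
    print(ports_needed(63))
```

Without breakout, the same 32 ports would attach only 32 servers at 400G each, leaving half of every 800G port unused.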

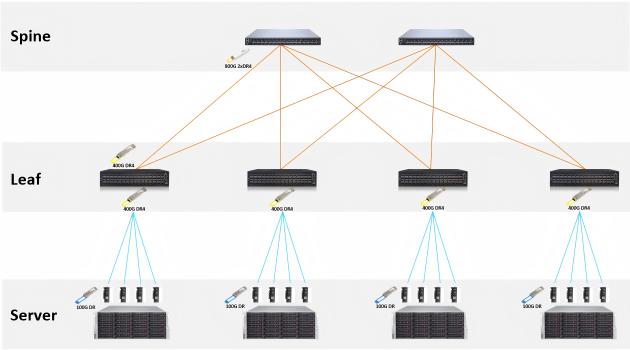

1.2 Smooth Upgrade from 400G to 800G Using DR4 and 2xDR4

For existing data centers built on 400G infrastructure, the key challenge for operators is moving to 800G while minimizing equipment replacement costs. In such environments, many servers still use 100G network adapters fitted with 100G DR optical modules.

- Leaf Switch Layer: Deploy 400G DR4 optical modules with MPO-LC breakout cables to split a single 400G DR4 port into four independent 100G links, each connecting to a server's 100G DR optical module.

- Spine Switch Layer: Introduce 800G 2xDR4 optical modules, where each module connects to two 400G DR4 modules, forming a "dual-aggregation" backbone link for higher throughput.
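The two layers can be captured as a small cabling plan. This is a minimal sketch, assuming one MPO-LC breakout per 400G DR4 leaf port and one 400G DR4 far end per MPO-12 interface of the 800G 2xDR4 spine module; the `Link` type, the helper functions, and all device and port names ("leaf1", "p49", and so on) are hypothetical placeholders.

```python
from dataclasses import dataclass

# Sketch of a cabling plan for the 400G-to-800G upgrade described above.
# Device and port names are hypothetical placeholders. Requires Python 3.9+.


@dataclass(frozen=True)
class Link:
    a_end: str       # switch-side endpoint
    b_end: str       # far-end endpoint
    rate_gbps: int


def leaf_downlinks(leaf: str, port: int, servers: list[str]) -> list[Link]:
    """Split one 400G DR4 leaf port into up to four 100G links (MPO-LC breakout)."""
    if len(servers) > 4:
        raise ValueError("a 400G DR4 port breaks out into at most four 100G links")
    return [Link(f"{leaf}:p{port}/{i + 1}", f"{srv}:100G-DR", 100)
            for i, srv in enumerate(servers)]


def spine_uplinks(spine: str, port: int, leaf_ports: list[str]) -> list[Link]:
    """Connect one 800G 2xDR4 spine port to two 400G DR4 ports (one per MPO-12)."""
    if len(leaf_ports) != 2:
        raise ValueError("an 800G 2xDR4 module exposes exactly two 400G DR4 interfaces")
    return [Link(f"{spine}:p{port}/mpo{i + 1}", lp, 400)
            for i, lp in enumerate(leaf_ports)]


if __name__ == "__main__":
    plan = leaf_downlinks("leaf1", 1, ["srv1", "srv2", "srv3", "srv4"])
    plan += spine_uplinks("spine1", 1, ["leaf1:p49", "leaf2:p49"])
    for link in plan:
        print(f"{link.a_end:>16} -> {link.b_end:<14} {link.rate_gbps}G")
```

Raising `ValueError` on an impossible fan-out keeps a generated plan from silently asking a DR4 port for a fifth 100G leg.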

This approach enables a cost-effective upgrade path, leveraging existing 100G infrastructure while progressively scaling to 800G capacity.

II. 800G Optical Module Connection Solutions

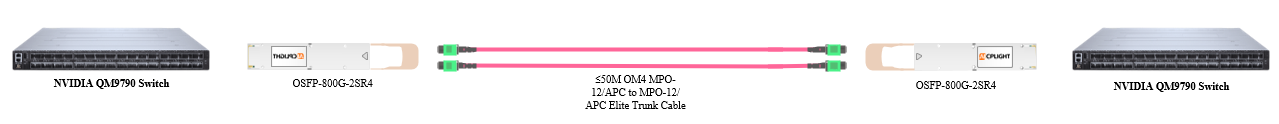

2.1 800G to 800G Direct Connection Solution

This solution employs a single 800G direct link, commonly used for interconnecting leaf and spine switches in high-performance computing (HPC) networks. It is suitable for scenarios where both ends support 800G ports.

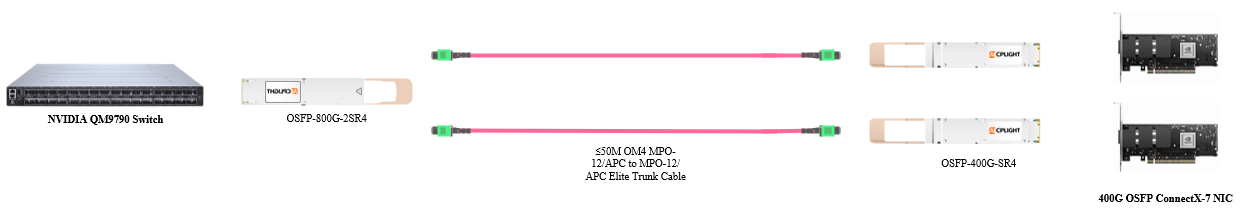

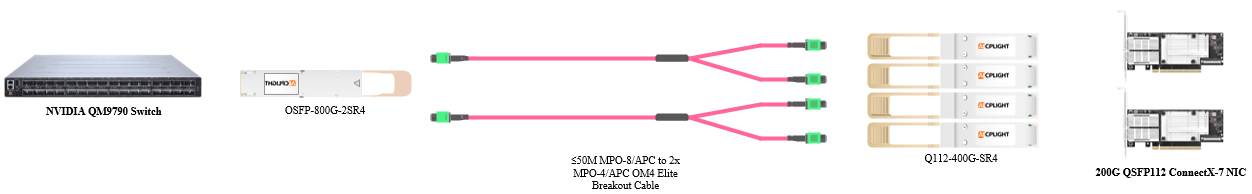

2.2 800G-to-2x400G Breakout Connection Solution

Leveraging the dual MPO-12 optical interface of 800G modules, this solution forms an "800G-to-2×400G" structure. It is typically deployed in environments where servers are equipped with NVIDIA ConnectX-7 400G NICs, with each server connecting to a leaf switch via a 400G uplink.

2.3 800G-to-4x200G Breakout Connection Solution

Building upon the 800G-to-2x400G approach, this solution further segments the connection using MPO breakout cables to achieve an "800G-to-4×200G" structure. It is ideal for server clusters equipped with dual-port 200G ConnectX-7 NICs.
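All three connection options in this section amount to grouping the module's eight 100G lanes into equal-width ports. The sketch below assumes contiguous lane numbering for illustration only; the actual lane-to-fiber mapping depends on the module and the breakout cable, and `breakout` and `port_rate_gbps` are hypothetical helper names.

```python
# Sketch of how an 800G module's eight 100G PAM4 lanes can be grouped into
# 1x800G, 2x400G or 4x200G ports. Contiguous lane numbering is an illustrative
# assumption; the real lane-to-fiber mapping depends on the module and cable.

LANES = 8
LANE_RATE_GBPS = 100


def breakout(ports: int) -> list[list[int]]:
    """Return the lane indices assigned to each port for an equal split."""
    if ports <= 0 or LANES % ports != 0:
        raise ValueError(f"{LANES} lanes cannot be split evenly into {ports} ports")
    width = LANES // ports
    return [list(range(p * width, (p + 1) * width)) for p in range(ports)]


def port_rate_gbps(ports: int) -> int:
    """Per-port rate when the module is split into `ports` equal groups."""
    return len(breakout(ports)[0]) * LANE_RATE_GBPS


if __name__ == "__main__":
    for ports in (1, 2, 4):
        print(f"{ports} x {port_rate_gbps(ports)}G -> lanes {breakout(ports)}")
```

Each 400G port in the 2x400G case uses four lanes (one MPO-12), and each 200G port in the 4x200G case uses two, which is why the 4x200G option needs additional MPO breakout cabling.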

III. Advantages of 800G OSFP Optical Modules in Data Centers

3.1 High Speed

800G modules primarily use PAM4 modulation, aggregating eight 100 Gbps lanes to reach 800 Gbps. Measured throughput reaches up to 794 Gbps.
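The rate figures can be checked with a little arithmetic. The article does not state the per-lane signaling details, so this sketch assumes the IEEE 802.3 100G-per-lane PAM4 format: 53.125 GBd symbols, 256b/257b transcoding and RS(544,514) FEC. Under those assumptions the wire carries more than 800 Gb/s, with the extra capacity consumed by coding and FEC overhead.

```python
# Sketch of the 800G rate arithmetic. Assumptions (not stated in the article):
# each lane uses the IEEE 802.3 100G PAM4 format, i.e. 53.125 GBd symbols,
# 256b/257b transcoding and RS(544,514) "KP4" FEC.

BITS_PER_SYMBOL = 2        # PAM4 has four levels -> log2(4) = 2 bits per symbol
SYMBOL_RATE_GBD = 53.125   # assumed symbol rate per lane
LANES = 8
NOMINAL_RATE_GBPS = 800
MEASURED_RATE_GBPS = 794   # measured figure quoted in the article


def lane_line_rate_gbps() -> float:
    """On-the-wire bit rate of one PAM4 lane."""
    return SYMBOL_RATE_GBD * BITS_PER_SYMBOL


def line_rate_from_payload(payload_gbps: float) -> float:
    """Add transcoding (257/256) and FEC (544/514) overhead to a payload rate."""
    return payload_gbps * (257 / 256) * (544 / 514)


if __name__ == "__main__":
    per_lane = lane_line_rate_gbps()
    print(f"per-lane line rate:  {per_lane} Gb/s")
    print(f"aggregate line rate: {per_lane * LANES} Gb/s")
    print(f"payload check:       {line_rate_from_payload(100):.2f} Gb/s per 100G lane")
    print(f"measured / nominal:  {MEASURED_RATE_GBPS / NOMINAL_RATE_GBPS:.2%}")
```

Both routes land on 106.25 Gb/s per lane, so eight lanes put about 850 Gb/s on the wire to deliver an 800 Gbps payload, and the quoted 794 Gbps measurement corresponds to roughly 99% of the nominal rate.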

3.2 Low Latency

Equipped with high-performance Broadcom VCSEL laser arrays, the modules preserve signal integrity while significantly reducing transmission latency.

3.3 Low Bit Error Rate (BER)

Advanced Broadcom 7nm DSP chips improve PAM4 signal processing efficiency, keeping the pre-FEC BER between 1E-9 and 1E-11 and enabling error-free transmission after FEC.
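To put the BER range in perspective, the sketch below converts it into raw error counts at 800 Gb/s and compares it with a FEC correction limit. Two assumptions are labeled in the code: the nominal 800 Gb/s rate stands in for the exact line rate, and 2.4E-4 is used as the pre-FEC BER limit commonly cited for RS(544,514) FEC, a figure that does not come from this article.

```python
import math

# Sketch: what a pre-FEC BER of 1E-9 to 1E-11 means at 800 Gb/s.
# Assumptions: the nominal 800 Gb/s rate is used as the bit rate, and
# 2.4E-4 is taken as the pre-FEC BER limit commonly cited for RS(544,514) FEC.

BIT_RATE_BPS = 800e9
FEC_BER_LIMIT = 2.4e-4  # assumed correction threshold, not from the article


def raw_errors_per_second(ber: float, bit_rate_bps: float = BIT_RATE_BPS) -> float:
    """Expected number of bit errors per second before FEC."""
    return ber * bit_rate_bps


def margin_decades(ber: float, limit: float = FEC_BER_LIMIT) -> float:
    """How many orders of magnitude the BER sits below the FEC limit."""
    return math.log10(limit / ber)


if __name__ == "__main__":
    for ber in (1e-9, 1e-11):
        print(f"pre-FEC BER {ber:.0e}: ~{raw_errors_per_second(ber):.0f} raw errors/s, "
              f"{margin_decades(ber):.1f} decades below the assumed FEC limit")
```

Even at 1E-9 the link sees hundreds of raw bit errors every second, but under the assumed threshold that rate sits more than five orders of magnitude inside what the FEC can correct, which is what makes post-FEC error-free operation plausible.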

3.4 High Stability

Rigorous stress and reliability testing—including prolonged high-frequency operation and extreme environmental simulations (temperature variations, power cycling, voltage fluctuations)—ensures long-term stable performance in real-world deployments.

3.5 High-Density Connectivity Design

The dual MPO-12 optical interface design supports both 800G-to-800G direct connections and 800G-to-2x400G breakout configurations, simplifying data center cabling and network architecture.

3.6 Efficient Thermal Management

The finned-top enclosure increases heat dissipation area, leveraging cold-aisle airflow to rapidly dissipate heat and maintain stable operating temperatures.

IV. Conclusion

With AI, HPC, and future workloads demanding extreme bandwidth and ultra-low latency, 800G is no longer optional but an inevitable choice for data center network upgrades.

As a specialist in high-speed optical interconnects, AICPLIGHT not only masters core 800G OSFP technology but also delivers end-to-end capabilities, from high-performance switches and HCAs to optical modules and cables. We are committed to helping operators build scalable, high-bandwidth, energy-efficient networks, ensuring a smooth and cost-effective transition to 800G. Our solutions empower your infrastructure to handle the next-generation data deluge and seize the opportunities of the AI era.
