As AI models grow larger and GPU clusters scale to unprecedented sizes, data center networks are becoming a primary performance bottleneck. Traditional 400G architectures are increasingly strained by massive east-west traffic, ultra-low latency requirements, and rising power density. 800G optical modules have emerged as the next-generation interconnect foundation, enabling higher bandwidth density, flatter network topologies, and more efficient AI-scale infrastructure across hyperscale data centers, GPU fabrics, and data center interconnect (DCI) networks.

What Is an 800G Optical Module?

An 800G optical module is a pluggable transceiver capable of transmitting and receiving data at an aggregate bandwidth of 800 gigabits per second. These modules typically operate using PAM4 modulation, advanced digital signal processing (DSP), and high-speed electrical interfaces based on 112G SerDes technology.

Figure 1: AICPLIGHT 800GBASE 2xSR4/SR8 OSFP Optical Transceiver Module

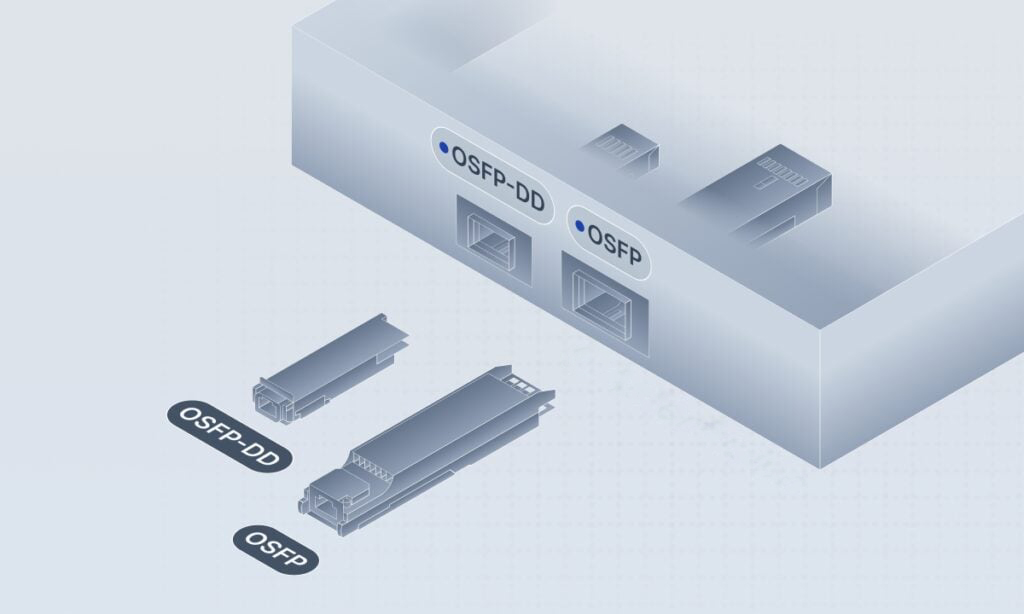

Today, 800G optical modules are primarily available in two form factors: OSFP and QSFP-DD800.

Both are designed to support the electrical, thermal, and mechanical demands of 800G operation while remaining compatible with modern switch ASICs.

The Technical Architecture Behind 800G Optical Modules

112G SerDes: The Electrical Foundation of 800G

At the electrical interface layer, 800G optical modules are built on 112G PAM4 SerDes technology. Compared with the 50G-class SerDes used in 400G designs, 112G signaling doubles the bandwidth per lane while placing far stricter demands on signal integrity.

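Most 800G modules adopt an 8 × 100G electrical interface: eight lanes of 112G PAM4 SerDes, each carrying roughly 100 Gb/s of payload.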

This lane-efficient architecture enables higher front-panel port density and more effective utilization of next-generation switch ASICs.
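The lane arithmetic behind this can be sketched in a few lines. The per-lane payload figures below (about 100 Gb/s for a "112G" SerDes and about 50 Gb/s for the previous generation) are nominal values assumed for illustration, and `lanes_needed` is a hypothetical helper, not part of any vendor tool.

```python
import math


def lanes_needed(port_gbps: float, lane_gbps: float) -> int:
    """Return the number of electrical lanes needed to carry a port's nominal rate."""
    return math.ceil(port_gbps / lane_gbps)


# Assumed nominal payload per lane: ~100 Gb/s for 112G SerDes, ~50 Gb/s for 50G-class SerDes.
lanes_800g_at_100g = lanes_needed(800, 100)  # 8 lanes
lanes_800g_at_50g = lanes_needed(800, 50)    # 16 lanes
lanes_400g_at_50g = lanes_needed(400, 50)    # 8 lanes

print(lanes_800g_at_100g, lanes_800g_at_50g, lanes_400g_at_50g)
```

Under these assumptions an 800G port at 100G per lane needs the same eight lanes as a 400G port at 50G per lane, which is why the faster SerDes generation can double port bandwidth without doubling the lane count.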

PAM4 Modulation and DSP: Balancing Speed and Reliability

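To reach 800G without excessive baud rates, PAM4 modulation is mandatory. By encoding two bits per symbol across four amplitude levels, PAM4 doubles data throughput compared to NRZ signaling at the same symbol rate, but at the cost of reduced noise margins, because the same voltage swing is divided into three smaller eyes.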
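The trade-off can be made concrete with a short calculation. This is a minimal sketch that assumes a per-lane line rate of 106.25 Gb/s (a commonly quoted figure for 100G-class PAM4 lanes, not stated in this article) and an idealized model in which the only penalty is the smaller eye height; the function names are illustrative.

```python
import math


def bits_per_symbol(levels: int) -> float:
    """Bits carried by one symbol of an M-level PAM signal."""
    return math.log2(levels)


def symbol_rate_gbaud(line_rate_gbps: float, levels: int) -> float:
    """Symbol rate required to carry a given line rate."""
    return line_rate_gbps / bits_per_symbol(levels)


def eye_penalty_db(levels: int) -> float:
    """Idealized amplitude penalty vs. NRZ for the same peak-to-peak swing."""
    return 20 * math.log10(levels - 1)


LANE_RATE_GBPS = 106.25  # assumed per-lane line rate, including coding overhead

pam4_baud = symbol_rate_gbaud(LANE_RATE_GBPS, 4)  # 53.125 GBd
nrz_baud = symbol_rate_gbaud(LANE_RATE_GBPS, 2)   # 106.25 GBd
pam4_penalty = eye_penalty_db(4)                  # about 9.5 dB

print(pam4_baud, nrz_baud, round(pam4_penalty, 2))
```

In this simplified model PAM4 halves the required symbol rate but gives up roughly 9.5 dB of eye amplitude, which is the gap that equalization and FEC have to close.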

To maintain link reliability, modern 800G optical modules integrate advanced DSP (Digital Signal Processing) chips, responsible for:

- Adaptive signal equalization
- Clock and data recovery (CDR)
- Forward Error Correction (RS-FEC / KP4 FEC)
- Real-time link monitoring and optimization

With DSPs fabricated on 7nm and 5nm process nodes, 800G modules strike a balance between performance, power consumption, and thermal stability.
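To give a sense of what the FEC step costs in bandwidth, the sketch below works through the overhead of the RS(544,514) code usually referred to as KP4 FEC. It assumes the Ethernet-style combination of 256b/257b transcoding with RS(544,514); the article does not spell out these parameters, so treat them as assumptions.

```python
from fractions import Fraction

RS_N, RS_K = 544, 514           # RS(544,514): 544 symbols per codeword, 514 of them data
TRANSCODE = Fraction(257, 256)  # assumed 256b/257b transcoding ahead of the FEC encoder

# A Reed-Solomon code can correct up to (n - k) / 2 symbol errors per codeword.
correctable_symbols = (RS_N - RS_K) // 2

fec_expansion = Fraction(RS_N, RS_K)
total_expansion = TRANSCODE * fec_expansion  # 17/16

line_rate_gbps = 800 * total_expansion       # 850 Gb/s on the wire
per_lane_gbps = line_rate_gbps / 8           # 106.25 Gb/s per lane, assuming 8 lanes

print(correctable_symbols, total_expansion, line_rate_gbps, float(per_lane_gbps))
```

Under these assumptions the coding overhead is exactly 1/16, so an 800 Gb/s payload becomes 850 Gb/s of line rate, or 106.25 Gb/s on each of eight lanes.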

OSFP vs QSFP-DD800: Choosing the Right 800G Form Factor

OSFP: Optimized for Performance and Thermal Headroom

OSFP was designed specifically for very high-speed optical interfaces. Its larger mechanical footprint allows for:

- Higher module power budgets (often 14–16W and beyond)
- Superior airflow and heat sink design
- A clear upgrade path toward 1.6T optics

As a result, OSFP is widely adopted in AI spine switches, GPU fabrics, and InfiniBand networks, where sustained performance is critical.

QSFP-DD800: Density and Backward Compatibility

QSFP-DD800 builds upon the established QSFP-DD ecosystem introduced at 400G. Its key strengths include:

- Backward compatibility with 400G QSFP-DD ports
- Higher front-panel port density
- Smoother migration from 400G to 800G

QSFP-DD800 is often preferred in environments where upgrade continuity and density are prioritized over maximum thermal headroom.

Figure 2: OSFP vs QSFP-DD Form Factor

Power and Thermal Challenges at 800G

Power consumption is one of the most critical constraints in 800G deployments. Early-generation 800G optical modules typically consume 13–18W, depending on reach and optical architecture.

Major contributors to power usage include:

- High-speed DSP processing
- Laser sources (EML or DFB)
- Thermal stabilization components (TEC)

To mitigate these challenges, the industry is advancing:

- Silicon photonics integration
- Improved thermal materials and airflow design
- Linear Pluggable Optics (LPO), which reduce or eliminate onboard DSPs

In large-scale AI data centers, power efficiency per bit has become a decisive factor in 800G network design.
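Power efficiency per bit is easy to estimate from the module power figures quoted above. The sketch below converts the 13–18W range into picojoules per bit; the 64-port switch is a hypothetical example used only to show how module power adds up at the chassis level.

```python
def picojoules_per_bit(power_watts: float, rate_gbps: float) -> float:
    """Energy per bit: 1 W per Gb/s equals 1000 pJ/bit."""
    return power_watts * 1000.0 / rate_gbps


low_pj = picojoules_per_bit(13, 800)   # 16.25 pJ/bit
high_pj = picojoules_per_bit(18, 800)  # 22.5 pJ/bit

PORTS_PER_SWITCH = 64  # hypothetical fully populated switch, for illustration only
optics_power_range_w = (13 * PORTS_PER_SWITCH, 18 * PORTS_PER_SWITCH)

print(low_pj, high_pj, optics_power_range_w)
```

For this hypothetical switch the optics alone would draw between 832 W and 1152 W, which illustrates why even a few watts saved per module matters at scale.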

Why AI Data Centers Are Driving 800G Adoption

East-West Traffic Becomes the Dominant Workload

AI training and inference workloads generate massive east-west traffic, as GPUs continuously exchange gradients and parameters. These communication patterns demand:

- Extremely high bandwidth
- Ultra-low latency
- Predictable tail latency

By enabling higher link capacity and flatter network architectures, 800G optical modules directly address these AI-specific networking challenges.
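As a rough illustration of why link capacity matters for gradient exchange, the sketch below estimates the bandwidth term of a ring all-reduce, in which each GPU sends and receives 2(N-1)/N times the payload. It ignores latency, protocol overhead, and overlap with compute, and the payload size and GPU count are hypothetical values chosen for the example.

```python
def ring_allreduce_seconds(payload_bytes: float, num_gpus: int, link_gbps: float) -> float:
    """Bandwidth-only estimate of ring all-reduce time for one GPU's link."""
    bytes_on_wire = 2 * (num_gpus - 1) / num_gpus * payload_bytes
    return bytes_on_wire * 8 / (link_gbps * 1e9)


GRADIENT_BYTES = 10e9  # hypothetical 10 GB of gradients per step
NUM_GPUS = 8           # hypothetical ring size

t_400g = ring_allreduce_seconds(GRADIENT_BYTES, NUM_GPUS, 400)
t_800g = ring_allreduce_seconds(GRADIENT_BYTES, NUM_GPUS, 800)

print(f"400G: {t_400g:.3f} s, 800G: {t_800g:.3f} s")
```

In this idealized model doubling the link rate halves the communication time; real gains are smaller when latency or compute dominates the step.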

Measurable Gains at the Cluster Level

In large GPU clusters, migrating from 400G to 800G networking can:

- Reduce network oversubscription
- Shorten AI model training cycles
- Improve overall GPU utilization

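Vendor and operator reports generally indicate that 800G-based fabrics deliver higher training efficiency than comparable 400G deployments, especially for large-scale AI models whose training time is dominated by inter-GPU communication. The size of the gain depends on the workload and the network topology.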
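The oversubscription effect can be shown with a simple ratio of downlink to uplink capacity on a leaf switch. The port counts below describe a hypothetical leaf, not a specific product.

```python
def oversubscription_ratio(down_ports: int, down_gbps: float,
                           up_ports: int, up_gbps: float) -> float:
    """Ratio of server-facing capacity to fabric-facing capacity on one switch."""
    return (down_ports * down_gbps) / (up_ports * up_gbps)


# Hypothetical leaf: 32 x 400G server-facing ports and 8 uplinks.
ratio_400g_uplinks = oversubscription_ratio(32, 400, 8, 400)  # 4.0, i.e. 4:1
ratio_800g_uplinks = oversubscription_ratio(32, 400, 8, 800)  # 2.0, i.e. 2:1

print(ratio_400g_uplinks, ratio_800g_uplinks)
```

With the same number of uplink ports, moving the uplinks from 400G to 800G halves the oversubscription ratio in this example.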

Deployment Scenarios for 800G Optical Modules

Spine Layer in Leaf-Spine Architectures

One of the most common uses of 800G optics is in the spine layer of AI-oriented leaf-spine networks. Higher spine bandwidth allows operators to:

- Scale cluster size without increasing switch count
- Reduce cabling complexity
- Improve resilience and fault tolerance

GPU Fabrics and InfiniBand Networks

800G optical modules are increasingly deployed in InfiniBand-based GPU fabrics, where deterministic latency and throughput are essential. These environments benefit from:

OSFP is the predominant form factor on current InfiniBand switch platforms, while QSFP-DD800 is more commonly found on Ethernet switches.

Data Center Interconnect (DCI)

For campus-scale interconnects within the reach of these optics (roughly 500 m for DR8 and 2 km for FR4), 800G DR8 and FR4 modules enable high-capacity links between data center buildings. Compared with carrying the same capacity over multiple parallel 400G links, 800G can reduce:

- Fiber count
- Transceiver quantity
- Operational complexity
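A quick count shows where the transceiver savings come from. The target capacity below is a hypothetical figure, and the sketch counts only links and transceivers (two per link); fiber savings depend on the optical architecture, since parallel-fiber modules use more fibers per link than wavelength-multiplexed ones, so fiber count is not modeled here.

```python
import math


def links_needed(target_gbps: float, link_gbps: float) -> int:
    """Number of point-to-point links needed to reach a target capacity."""
    return math.ceil(target_gbps / link_gbps)


def transceivers_needed(target_gbps: float, link_gbps: float) -> int:
    """Each link needs one transceiver at each end."""
    return 2 * links_needed(target_gbps, link_gbps)


TARGET_GBPS = 6400  # hypothetical inter-building capacity requirement

links_400g = links_needed(TARGET_GBPS, 400)
links_800g = links_needed(TARGET_GBPS, 800)
optics_400g = transceivers_needed(TARGET_GBPS, 400)
optics_800g = transceivers_needed(TARGET_GBPS, 800)

print(links_400g, links_800g, optics_400g, optics_800g)
```

For this example the same capacity needs 16 links and 32 transceivers at 400G, versus 8 links and 16 transceivers at 800G.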

800G Optical Module Types and Reach Options

To support diverse deployment needs, 800G optical modules are available in multiple variants:

- 800G SR8: Multimode fiber, short reach, ideal for high-density intra-data-center links
- 800G DR8: Single-mode fiber, up to ~500 meters, well suited for leaf-spine connections
- 800G FR4: CWDM-based design, up to 2 km, commonly used for aggregation and DCI

Selecting the appropriate reach option is essential to balancing cost, power consumption, and network scalability.
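A selection rule along these lines can be sketched as a small lookup. The DR8 and FR4 reaches come from the list above; the SR8 reach of 50 m is an assumed placeholder, since the article only says "short reach", and the fiber type for FR4 is assumed to be single-mode. The `ModuleType` class and `candidates` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModuleType:
    name: str
    fiber: str          # "MMF" (multimode) or "SMF" (single-mode)
    max_reach_m: float


CATALOG = [
    ModuleType("800G SR8", "MMF", 50),    # ASSUMED reach; check the module datasheet
    ModuleType("800G DR8", "SMF", 500),   # from the list above
    ModuleType("800G FR4", "SMF", 2000),  # from the list above; SMF assumed
]


def candidates(distance_m: float, fiber: str) -> list[str]:
    """Return module types whose fiber type matches and whose reach covers the link."""
    return [m.name for m in CATALOG
            if m.fiber == fiber and m.max_reach_m >= distance_m]


print(candidates(300, "SMF"))
print(candidates(1500, "SMF"))
print(candidates(3000, "SMF"))
```

A real selection would also weigh cost and power per module, which this sketch leaves out; when several types qualify, the shortest-reach option is often the cheaper one.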

Migration from 400G to 800G: A Practical Perspective

Despite rapid innovation, 400G remains a critical part of current data center networks. Most operators are adopting hybrid architectures where:

- 800G is introduced at the spine or core layer
- 400G continues to serve access, storage, or edge networks

This phased strategy protects existing investments while enabling a controlled transition to higher-speed infrastructure.

The Future of 800G and Beyond

Although 1.6T optical modules are already under development, 800G is expected to remain a mainstream technology for the next 3–5 years. Key trends shaping its evolution include:

- Broader adoption of LPO architectures
- Continued improvements in silicon photonics yields
- Closer integration with next-generation switch ASICs

The experience gained from deploying 800G networks today will directly influence the success of future 1.6T and CPO-based designs.

Conclusion

800G optical modules are not merely a bandwidth upgrade—they are a strategic enabler for AI-scale computing. By combining 112G SerDes, PAM4 modulation, advanced DSP, and optimized form factors, 800G optics deliver the performance, density, and efficiency required by modern data centers. For AI training clusters, hyperscale cloud environments, and high-capacity interconnect networks, 800G represents the foundation of next-generation digital infrastructure, enabling scalable growth while keeping power and complexity under control.
