Today, two networking technologies dominate AI and high-performance computing (HPC) environments: InfiniBand and Ethernet enhanced with RoCEv2 (RDMA over Converged Ethernet v2). While most discussions focus on switches, NICs, and protocols, the optical modules connecting these systems are equally essential.

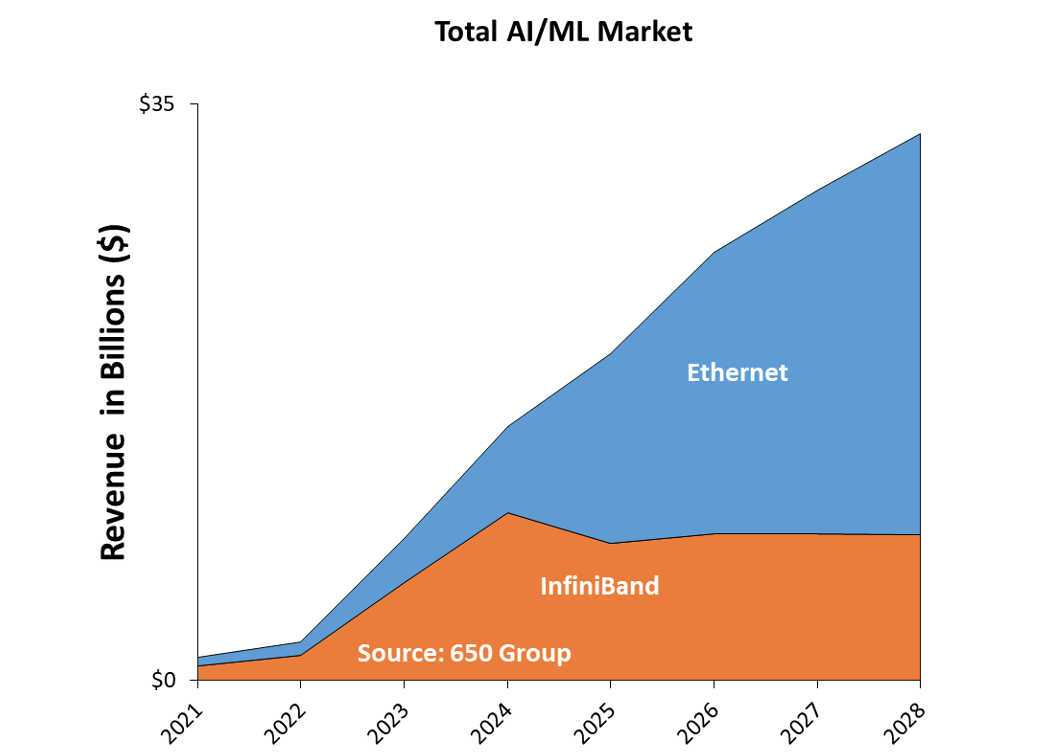

Figure 1: InfiniBand vs. Ethernet Market Size Forecast (Source: 650 Group)

High-speed optical transceivers directly affect bandwidth density, latency behavior, power consumption, and long-term scalability. This article compares InfiniBand and Ethernet from an optical connectivity perspective, with a focus on how to select the right 400G and 800G optical modules for AI networks.

Why Optical Modules Matter in AI Networks

In traditional enterprise networks, optical modules are often treated as interchangeable commodity parts. In AI data centers, this assumption no longer holds.

Large-scale GPU clusters generate massive east–west traffic, with GPUs constantly exchanging gradients, parameters, and synchronization data. Even minor inefficiencies at the optical layer—such as added latency, signal instability, or excessive power consumption—can compound across thousands of nodes and significantly impact training time.

In AI networks, optical modules directly influence:

- End-to-end latency and latency jitter
- Packet loss and congestion behavior
- Thermal balance inside high-density switches
- Scalability from hundreds to thousands of GPUs

As a result, optical module selection becomes a first-order design decision rather than a secondary procurement task.

InfiniBand vs. Ethernet in AI Clusters: An Optical Perspective

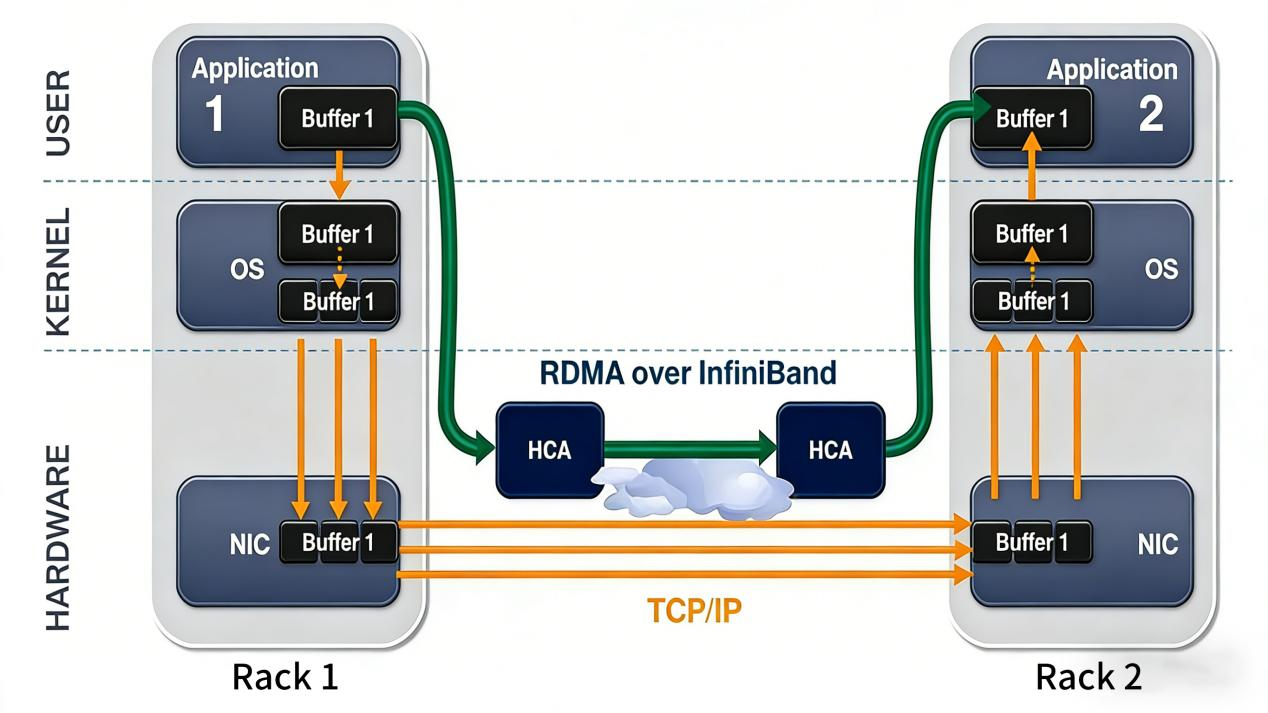

InfiniBand has long been the preferred interconnect for HPC and tightly coupled AI workloads. It provides native RDMA, deterministic latency, and mature congestion control mechanisms. These characteristics make InfiniBand well suited for large GPU clusters where predictable performance is critical.

Figure 2: How RDMA over InfiniBand enables direct application-to-application data transfer by bypassing the kernel, compared with the traditional TCP/IP data path across racks.

Ethernet, meanwhile, has evolved rapidly. With the introduction of RoCEv2, Ethernet now supports RDMA while maintaining its open ecosystem and cost advantages. As a result, RoCEv2-based Ethernet has become the dominant choice for hyperscalers and many enterprise AI deployments.

From a physical layer perspective, however, the two technologies are converging. Both InfiniBand and Ethernet rely on the same high-speed optical module standards, including QSFP-DD and OSFP form factors, as well as similar modulation and signaling technologies.

The key differences lie not in the optical interface itself, but in how optical module characteristics align with protocol behavior and deployment philosophy.

Bandwidth Scaling and Optical Module Requirements

AI workloads place extreme demands on network bandwidth. To keep pace, optical interconnect speeds have increased rapidly:

- 400G optical modules are now mainstream in AI training clusters
- 800G optical modules are being deployed for next-generation AI fabrics
- 1.6T optical modules are already appearing on industry roadmaps

Commonly deployed optical transceivers include:

- 400G QSFP-DD DR4 optical modules for short-reach, low-latency links
- 400G FR4 optical modules for longer reach with reduced fiber count
- 800G OSFP DR8 optical modules for ultra-high-density AI switches

For example, a 400G DR4 optical module (500 m, single-mode) is widely used in both InfiniBand NDR and 400G Ethernet AI networks. It offers a good balance of bandwidth density, power efficiency, and latency, which makes it a common choice for ToR-to-spine connectivity.
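The difference in fiber count between DR and FR modules can be made concrete with a little arithmetic. The sketch below assumes the usual lane layouts (DR4 and DR8 use one fiber pair per 100G lane, while FR4 multiplexes four wavelengths onto a single duplex pair). The `ModuleType` class and `fibers_needed` helper are illustrative names, not part of any vendor tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModuleType:
    name: str
    lanes: int            # optical lanes (parallel fibers or wavelengths)
    lane_rate_gbps: int   # nominal data rate per lane
    fibers_per_link: int  # total fibers per link, both directions

    @property
    def total_gbps(self) -> int:
        return self.lanes * self.lane_rate_gbps


# Assumed lane layouts; confirm against the datasheet of the module in use.
MODULES = {
    "400G-DR4": ModuleType("400G-DR4", 4, 100, 8),   # 4 parallel fiber pairs
    "400G-FR4": ModuleType("400G-FR4", 4, 100, 2),   # 4 wavelengths, 1 duplex pair
    "800G-DR8": ModuleType("800G-DR8", 8, 100, 16),  # 8 parallel fiber pairs
}


def fibers_needed(module: str, links: int) -> int:
    """Total fiber strands required for `links` point-to-point links."""
    return MODULES[module].fibers_per_link * links


if __name__ == "__main__":
    for name in MODULES:
        print(name, MODULES[name].total_gbps, "Gb/s,",
              fibers_needed(name, 64), "fibers for 64 links")
```

For the same 64 links, the DR4 layout needs four times as many strands as FR4, which is the cabling cost paid for the simpler parallel optics.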

Latency Sensitivity and Optical Module Design

Latency is one of the most critical metrics in AI training environments. Even small increases in latency can reduce synchronization efficiency during distributed training, especially as cluster size grows.

InfiniBand deployments often prioritize short-reach DR optical modules, such as 400G DR4 or 800G DR8, because they minimize digital signal processing (DSP) complexity. Reduced DSP overhead leads to lower latency and more deterministic performance.

RoCEv2 Ethernet networks, while slightly more tolerant of latency variation, still benefit significantly from low-latency optical transceivers. Selecting optical modules optimized for AI workloads, rather than generic long-reach modules, helps maintain stable lossless Ethernet behavior and reduces the risk of congestion-related performance degradation.
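Besides the latency added inside the module, the fiber itself adds propagation delay that grows with link length. As a rough sketch, the delay can be estimated from the fiber's group index. The value 1.468 used here is an assumption typical of standard single-mode fiber, and `fiber_delay_ns` is a hypothetical helper name.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458

# Assumption: group index typical of standard single-mode fiber.
ASSUMED_GROUP_INDEX = 1.468


def fiber_delay_ns(length_m: float,
                   group_index: float = ASSUMED_GROUP_INDEX) -> float:
    """One-way propagation delay through a fiber of the given length, in ns."""
    return length_m * group_index / SPEED_OF_LIGHT_M_PER_S * 1e9


if __name__ == "__main__":
    for length_m in (30, 500, 2000):
        print(f"{length_m} m: {fiber_delay_ns(length_m):.0f} ns one-way")
```

Under this assumption the delay is a little under 5 ns per meter, so a 500 m link contributes roughly 2.4 µs one-way before any module or switch latency is counted.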

Reach, Topology, and Cabling Considerations

AI data center topologies, including leaf–spine and dragonfly architectures, have a direct impact on optical module selection.

| Reach | Typical Optical Module | Common Use Case |
| --- | --- | --- |
| ≤2 m | DAC | In-rack GPU connections |
| ≤30 m | AOC | Short inter-rack links |
| 500 m | 400G / 800G DR | ToR to Spine |
| 2 km | 400G FR4 / 800G 2×FR4 | Spine to Core |

In large-scale AI fabrics, single-mode optical modules dominate due to their scalability and future-proofing advantages. Over-specifying reach—such as using FR modules where DR is sufficient—often increases cost and power consumption without delivering measurable performance benefits.
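The reach tiers above can be encoded as a simple lookup that returns the shortest-reach option covering a given link length, which is one way to avoid over-specifying reach. This is a minimal sketch: the `REACH_TABLE` entries mirror the table in this section, and `pick_interconnect` is a hypothetical helper name.

```python
# (maximum reach in meters, interconnect type), ordered shortest first.
REACH_TABLE = [
    (2, "DAC"),
    (30, "AOC"),
    (500, "400G / 800G DR"),
    (2000, "400G FR4 / 800G 2xFR4"),
]


def pick_interconnect(link_length_m: float) -> str:
    """Return the shortest-reach option that still covers the link."""
    if link_length_m <= 0:
        raise ValueError("link length must be positive")
    for max_reach_m, media in REACH_TABLE:
        if link_length_m <= max_reach_m:
            return media
    raise ValueError(f"no option listed for {link_length_m} m")


if __name__ == "__main__":
    for length_m in (1.5, 25, 120, 1500):
        print(f"{length_m} m -> {pick_interconnect(length_m)}")
```

Because the table is scanned from shortest to longest reach, a 120 m ToR-to-spine link resolves to a DR module rather than FR4.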

Power Consumption, Thermal Design, and Form Factors

High-speed AI switches and GPUs operate close to thermal limits. Optical module power consumption therefore becomes a key constraint.

Typical power ranges include:

InfiniBand deployments often favor OSFP optical modules because of their superior thermal characteristics and mechanical design. Ethernet deployments may use a mix of QSFP-DD and OSFP, depending on switch architecture and port density requirements.

Thermally optimized optical modules help maintain stable operation in dense AI environments and reduce the risk of performance throttling.
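A quick way to see why module power matters is to total it across a fully populated switch. The sketch below is illustrative only: the per-module wattage and the power budget are placeholder inputs rather than figures from this article or any datasheet, and the function names are hypothetical.

```python
def optics_power_w(ports: int, watts_per_module: float) -> float:
    """Total optical module power for a fully populated switch."""
    return ports * watts_per_module


def fits_budget(ports: int, watts_per_module: float, budget_w: float) -> bool:
    """True if the optics fit within the power reserved for them."""
    return optics_power_w(ports, watts_per_module) <= budget_w


if __name__ == "__main__":
    # Placeholder values; substitute real datasheet numbers.
    ports = 32
    watts_per_module = 16.0
    budget_w = 600.0
    total = optics_power_w(ports, watts_per_module)
    print(f"{ports} modules x {watts_per_module} W = {total} W "
          f"(fits budget: {fits_budget(ports, watts_per_module, budget_w)})")
```

Even a difference of a few watts per module adds up to tens or hundreds of watts per switch, which is why form factor and thermal design are considered together.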

Ecosystem, Compatibility, and Total Cost of Ownership

Ethernet benefits from a broad, multi-vendor ecosystem that supports flexible sourcing of third-party compatible optical modules. This openness can significantly reduce total cost of ownership (TCO) in large AI deployments.

InfiniBand environments, by contrast, are often more tightly integrated. Optical modules must undergo strict compatibility validation with switches and NICs to ensure stable operation. In both ecosystems, accurate DOM/DDM monitoring and proven interoperability are essential for maintaining long-term reliability.
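DOM/DDM monitoring typically exposes readings such as module temperature and optical transmit and receive power. A minimal sketch of a threshold check is shown below. The field names and limits are hypothetical, and real alarm thresholds should come from the module itself or its datasheet.

```python
from typing import Dict, List, Tuple


def dom_alarms(readings: Dict[str, float],
               limits: Dict[str, Tuple[float, float]]) -> List[str]:
    """Return a message for every reading that is missing or out of range."""
    alarms = []
    for name, (low, high) in limits.items():
        value = readings.get(name)
        if value is None:
            alarms.append(f"{name}: missing")
        elif not low <= value <= high:
            alarms.append(f"{name}: {value} outside [{low}, {high}]")
    return alarms


if __name__ == "__main__":
    # Hypothetical limits and readings, for illustration only.
    limits = {
        "temperature_c": (0.0, 70.0),
        "tx_power_dbm": (-3.0, 4.0),
        "rx_power_dbm": (-6.0, 4.0),
    }
    readings = {"temperature_c": 78.5, "tx_power_dbm": 1.2, "rx_power_dbm": -2.0}
    for alarm in dom_alarms(readings, limits):
        print(alarm)
```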

Practical Optical Module Selection Guidelines for AI Networks

When designing AI networks, several practical guidelines can help streamline optical module selection:

- Use DR optical modules whenever reach requirements allow to minimize latency and power consumption
- Reserve FR optical modules for spine or core layers where longer reach is truly required
- Match OSFP or QSFP-DD form factors to switch thermal design and port density
- Prioritize optical modules validated for AI and RDMA workloads, not just generic data traffic

Following these principles helps ensure that the optical layer supports—not limits—AI network performance.

Conclusion

InfiniBand and Ethernet differ significantly at the protocol level, but their physical-layer requirements increasingly converge. High-performance AI networks depend on 400G and 800G optical modules that deliver low latency, high reliability, and efficient power usage.

As AI clusters continue to scale, optical module selection becomes a strategic design decision, directly shaping performance, scalability, and operational stability. In modern AI data centers, optical modules are no longer interchangeable commodity parts; they are core enablers of network efficiency.
