Introduction

Cloud computing, big data, and AI services have grown explosively in recent years, driving ever-increasing demands for bandwidth, elastic scalability, and low-latency communication in data center networks. The traditional three-tier network architecture has become inadequate for large-scale distributed traffic. The Leaf-Spine architecture, derived from the Clos network model, has emerged as the core networking solution for modern data centers thanks to its flattened topology. This article provides a detailed introduction to this architecture.

1. Fundamentals of Leaf-Spine Architecture

1.1 What is Leaf-Spine Architecture?

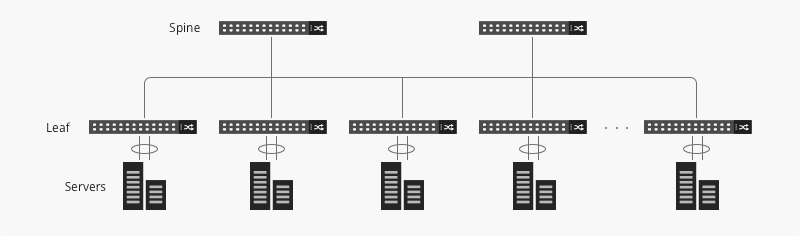

The Leaf-Spine architecture is a flattened two-layer network topology composed of Leaf access switches and Spine core switches, designed to meet the high-throughput and low-latency requirements of data centers.

Leaf switches directly connect to terminal devices such as servers and storage systems, serving as access and traffic aggregation points. Their port count and speed directly determine the architecture's port density. Spine switches, on the other hand, do not connect to terminals but instead interconnect all Leaf switches to facilitate cross-Leaf traffic forwarding.

This architecture eliminates the aggregation layer of the traditional three-tier design. Instead, every Leaf switch connects to every Spine switch, which removes traffic forwarding bottlenecks while simplifying network configuration and maintenance. In practical deployments, port density planning is a critical factor in Leaf switch selection, directly impacting terminal access scale and service capacity.

1.2 Clos Network Model

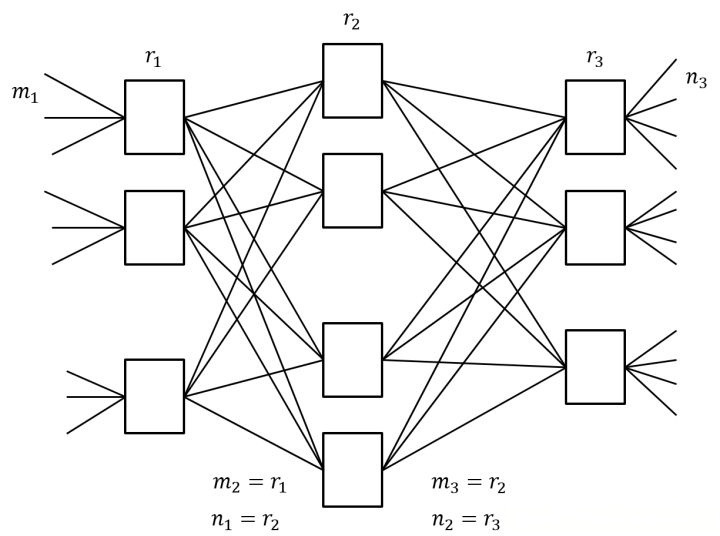

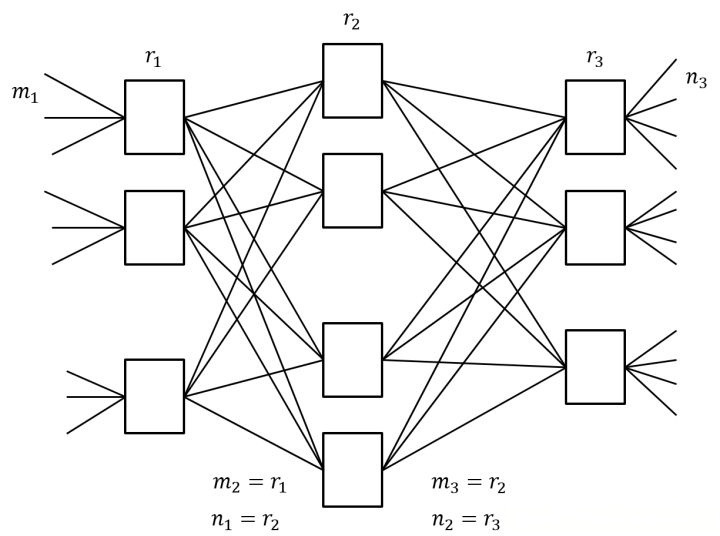

The Clos network model, proposed by Charles Clos at Bell Labs in 1953, is a multi-stage interconnection architecture that can be made non-blocking, and it serves as the theoretical foundation for the Leaf-Spine architecture. It consists of input, middle, and output stages, with each node in one stage connected to every node in the adjacent stage. Adding middle-stage nodes increases network capacity roughly in proportion, which addresses the bandwidth bottlenecks inherent in traditional architectures.

The Leaf-Spine architecture is a simplified two-layer engineering implementation of the Clos model, where Leaf switches correspond to the input/output stages and Spine switches represent the middle stage. Compared to the theoretical Clos model, Leaf-Spine emphasizes oversubscription ratio control and efficient port resource utilization to accommodate asymmetric traffic patterns in data centers. The non-blocking nature of the Clos network provides the core theoretical support for Leaf-Spine's elastic scalability, enabling it to meet the dynamic expansion demands of data center services.
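The relationship between middle-stage size and capacity can be illustrated with a few lines of Python. This is a minimal sketch, assuming each Leaf has exactly one uplink to each Spine and all uplinks run at the same speed; the function names and the 100 Gbps figure are illustrative and do not come from any specific product.

```python
def equal_cost_paths(num_spines: int) -> int:
    """Leaf-to-Leaf paths: one through each Spine (assumes one link per Leaf-Spine pair)."""
    return num_spines


def leaf_uplink_capacity_gbps(num_spines: int, uplink_gbps: float) -> float:
    """Total uplink bandwidth of a single Leaf under the same assumption."""
    return num_spines * uplink_gbps


# Doubling the Spine count doubles both path diversity and per-Leaf uplink capacity.
for spines in (2, 4, 8):
    paths = equal_cost_paths(spines)
    capacity = leaf_uplink_capacity_gbps(spines, 100.0)
    print(f"{spines} spines: {paths} paths, {capacity:.0f} Gbps uplink per Leaf")
```

Under these assumptions, capacity grows in step with the number of Spine switches, which is the property the Clos model contributes to Leaf-Spine designs.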

1.3 Core Advantages of Leaf-Spine Architecture

The core advantages of the Leaf-Spine architecture stem from its flattened topology and its Clos foundation. The first is low latency and high bandwidth. Because every Leaf connects to every Spine, traffic between endpoints on different Leaf switches traverses at most two switch-to-switch hops (Leaf→Spine→Leaf), which keeps transmission delay low and predictable. Meanwhile, flexible bandwidth allocation between Leaf and Spine switches avoids the aggregation-layer bottlenecks of traditional architectures.
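The two-hop property can be checked on a small model of the fabric. The sketch below builds a hypothetical topology (the node names and sizes are made up for illustration) and uses breadth-first search to count the links on a shortest path between two Leaf switches.

```python
from collections import deque


def build_leaf_spine(num_leaves: int, num_spines: int) -> dict:
    """Return an adjacency dict in which every Leaf links to every Spine."""
    leaves = [f"leaf{i}" for i in range(num_leaves)]
    spines = [f"spine{j}" for j in range(num_spines)]
    graph = {node: set() for node in leaves + spines}
    for leaf in leaves:
        for spine in spines:
            graph[leaf].add(spine)
            graph[spine].add(leaf)
    return graph


def hop_count(graph: dict, src: str, dst: str) -> int:
    """Breadth-first search; returns the number of links on a shortest path."""
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist
        for nxt in graph[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    raise ValueError(f"{dst} is unreachable from {src}")


fabric = build_leaf_spine(num_leaves=4, num_spines=2)
print(hop_count(fabric, "leaf0", "leaf3"))  # 2: leaf0 -> some spine -> leaf3
```

Endpoints attached to the same Leaf never leave that switch, so two switch-to-switch hops is the upper bound rather than a fixed cost.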

The second is exceptional scalability. Adding a new Leaf switch only requires establishing links to all Spine switches, without modifying the existing topology, and higher port density on the Leaf switches directly expands terminal access capacity.
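A rough sizing calculation makes the scaling behavior concrete. This is a sketch under simplifying assumptions: one link per Leaf-Spine pair, every Spine port used for a Leaf, and identical switches within each layer. The port counts in the example are illustrative only.

```python
def links_for_new_leaf(num_spines: int) -> int:
    """A new Leaf needs one link to each existing Spine."""
    return num_spines


def max_leaves(spine_ports: int) -> int:
    """Each Leaf consumes one port on every Spine, so Spine port count caps the Leaf count."""
    return spine_ports


def max_endpoints(spine_ports: int, leaf_downlink_ports: int) -> int:
    """Upper bound on attached endpoints for a two-layer fabric."""
    return max_leaves(spine_ports) * leaf_downlink_ports


# Hypothetical fabric: 4 Spines with 32 ports each, Leafs with 48 downlink ports.
print(links_for_new_leaf(num_spines=4))                      # 4
print(max_endpoints(spine_ports=32, leaf_downlink_ports=48))  # 1536
```

The calculation also shows where two-layer scaling stops: once every Spine port is occupied, further growth needs higher-radix Spines or an additional tier.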

Finally, it offers simplified operations and fault isolation. The flattened structure reduces network hierarchy, easing configuration and troubleshooting. A failed Leaf switch affects only the endpoints attached to it, while a failed Spine switch reduces available bandwidth but leaves the remaining Spines to carry traffic, so neither causes a network-wide outage. Additionally, careful oversubscription ratio planning balances resource utilization and performance, adapting to diverse data center requirements.

2. Common Data Center Network Architectures

2.1 Fat-Tree Structure

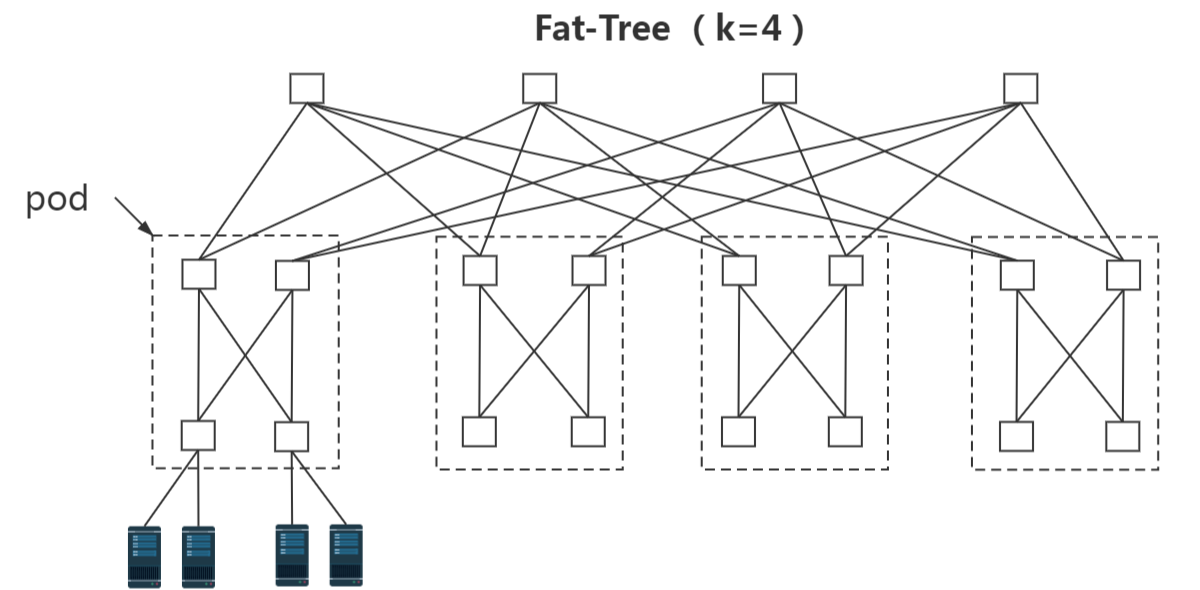

Fat-Tree is a multi-layer scalable architecture based on the Clos model, typically employing three or four tiers (access, aggregation, and core layers, with optional intermediate layers for large deployments). Its core feature is "tiered bandwidth scaling": aggregate link bandwidth increases from the access layer toward the core layer so that upper tiers do not become a bottleneck, which allows non-blocking transmission.

Port density on access layer switches directly determines terminal access scale, while link configurations in the aggregation and core layers determine the overall oversubscription ratio. In large-scale data center scenarios, Fat-Tree achieves elastic scalability by adding layers and nodes. However, its multi-tier design results in higher topological complexity than Leaf-Spine. Compared to flat two-layer solutions, Fat-Tree better suits hyper-scale heterogeneous data centers with complex traffic patterns.
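To give a sense of scale, the sketch below sizes the three-tier k-ary Fat-Tree commonly described in the literature, built entirely from identical k-port switches. That specific variant is an assumption made for illustration; the article does not prescribe it, and real deployments may differ.

```python
def k_ary_fat_tree(k: int) -> dict:
    """Element counts for a three-tier k-ary Fat-Tree built from k-port switches."""
    if k < 2 or k % 2:
        raise ValueError("k must be an even number >= 2")
    half = k // 2
    return {
        "pods": k,
        "access_switches": k * half,       # k/2 per pod
        "aggregation_switches": k * half,  # k/2 per pod
        "core_switches": half * half,      # (k/2)^2
        "hosts": k * half * half,          # k^3 / 4
    }


print(k_ary_fat_tree(4)["hosts"])   # 16
print(k_ary_fat_tree(48)["hosts"])  # 27648
```

With 48-port switches this variant reaches 27,648 hosts, at the cost of 2,880 switches and the cabling between three tiers, which illustrates the complexity trade-off described above.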

2.2 Comparison of Advantages and Disadvantages

The fundamental distinction between Leaf-Spine and Fat-Tree architectures lies in their topological hierarchy.

Leaf-Spine excels in:

- Ultra-low latency: Fixed two-hop forwarding (Leaf→Spine→Leaf) minimizes transmission delays.
- Operational simplicity: Adding a Leaf node only requires connecting it to every switch in the Spine layer, enabling seamless scaling without service disruption.
- Cost efficiency: Lower hardware expenditure and simplified cabling suit small and mid-scale data centers and cloud-native environments.

Limitation: Restricted oversubscription adjustment may cause bandwidth bottlenecks under extreme traffic patterns.

Fat-Tree specializes in:

- Bandwidth elasticity: Multi-tier bandwidth scaling achieves near-zero oversubscription or non-blocking transmission for hyperscale core services.
- Traffic adaptability: Multi-path forwarding supports dynamic load balancing and QoS policies.

Drawbacks: Complex topology, high hardware costs, and significant configuration coordination challenges between tiers, requiring substantially more operational resources than the Leaf-Spine architecture.

2.3 Differences in Network Traffic Management

The flat topology of the Leaf-Spine architecture enables simpler and more efficient traffic management. Forwarding paths between endpoints on different Leaf switches are fixed at two hops, so path selection stays simple and needs no elaborate path optimization strategy. The oversubscription ratio, the ratio of a Leaf's total downlink bandwidth to its total uplink bandwidth, serves as the core traffic control parameter. By matching link bandwidths between the Leaf and Spine layers, oversubscription from the access layer to the Spine layer can be precisely controlled to prevent congestion. This architecture favors static traffic scheduling, making it suitable for symmetric traffic scenarios like virtual machine migration and distributed storage.
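The oversubscription ratio can be computed directly from port counts and speeds. The following is a minimal sketch; the port counts and speeds are hypothetical examples, not recommendations.

```python
import math


def oversubscription_ratio(downlinks: int, downlink_gbps: float,
                           uplinks: int, uplink_gbps: float) -> float:
    """Total downlink bandwidth divided by total uplink bandwidth for one Leaf."""
    return (downlinks * downlink_gbps) / (uplinks * uplink_gbps)


def uplinks_for_target(downlinks: int, downlink_gbps: float,
                       uplink_gbps: float, target_ratio: float) -> int:
    """Smallest number of uplinks that keeps the ratio at or below target_ratio."""
    needed_gbps = downlinks * downlink_gbps / target_ratio
    return math.ceil(needed_gbps / uplink_gbps)


# Hypothetical Leaf: 48 x 25 Gbps downlinks, 4 x 100 Gbps uplinks.
print(oversubscription_ratio(48, 25.0, 4, 100.0))  # 3.0, i.e. 3:1
# Uplinks needed for a non-blocking (1:1) design with the same downlinks.
print(uplinks_for_target(48, 25.0, 100.0, 1.0))    # 12
```

A ratio of 1.0 corresponds to a non-blocking Leaf; larger values trade peak cross-Leaf bandwidth for fewer uplink ports and Spine switches.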

The multi-tier topology of the Fat-Tree structure requires dynamic adaptability in traffic management. Multiple forwarding paths provide redundant options for traffic scheduling, enabling real-time path optimization and load balancing based on traffic load. It also supports differentiated Quality of Service (QoS) policy deployment. However, its traffic management relies on complex routing protocols and monitoring systems, necessitating real-time monitoring of link utilization across all layers. Failure to do so may lead to localized congestion due to bandwidth mismatches between layers. This architecture is better suited for asymmetric, highly fluctuating mixed traffic scenarios.
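One simple form of utilization-aware path selection is to prefer the candidate path whose busiest link is least loaded. The sketch below illustrates the idea only; the switch names and utilization figures are invented for the example, and production networks typically rely on routing protocols and telemetry systems rather than a lookup table like this.

```python
def path_bottleneck(path: list, utilization: dict) -> float:
    """Highest utilization among the links of a path (its bottleneck)."""
    return max(utilization[link] for link in path)


def pick_path(paths: list, utilization: dict) -> list:
    """Choose the candidate path with the lowest bottleneck utilization."""
    return min(paths, key=lambda p: path_bottleneck(p, utilization))


# Two hypothetical access-to-access paths through different core switches.
path_a = [("acc1", "agg1"), ("agg1", "core1"), ("core1", "agg3"), ("agg3", "acc3")]
path_b = [("acc1", "agg2"), ("agg2", "core2"), ("core2", "agg4"), ("agg4", "acc3")]

# Made-up link utilization samples (fraction of capacity in use).
utilization = {
    ("acc1", "agg1"): 0.30, ("agg1", "core1"): 0.90,
    ("core1", "agg3"): 0.40, ("agg3", "acc3"): 0.20,
    ("acc1", "agg2"): 0.50, ("agg2", "core2"): 0.60,
    ("core2", "agg4"): 0.55, ("agg4", "acc3"): 0.35,
}

best = pick_path([path_a, path_b], utilization)
print(best is path_b)  # True: path_a is limited by the 0.90 link
```

Keeping such a table current is exactly the monitoring burden the paragraph describes: every tier's links have to be sampled continuously for the choice to remain valid.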
