Driven by the explosive growth of artificial intelligence (AI), cloud computing, 5G, and emerging immersive applications, data centers are entering an era where network bandwidth has become as critical as compute itself. Optical modules, responsible for carrying the majority of intra–data center traffic, have become a foundational building block of modern digital infrastructure.

As AI model training and inference scale to thousands of GPUs, traditional network architectures are being pushed to their limits. This article provides a strategic and technology-focused roadmap for the evolution of optical modules from 400G to 800G, 1.6T, and ultimately 3.2T, helping data center operators make informed, future-ready upgrade decisions.

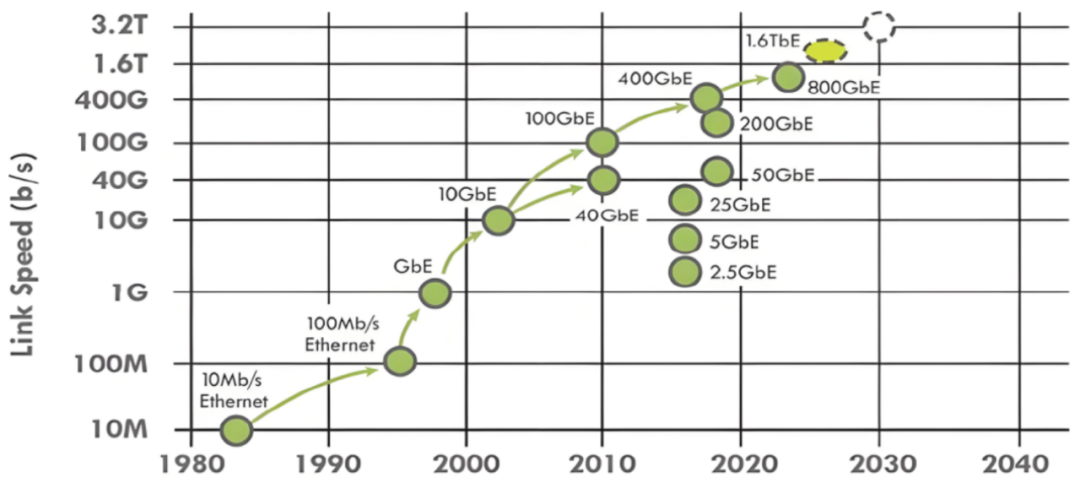

Figure 1: A historical timeline charting Ethernet link speed evolution from 10 Mb/s to a projected 3.2 Tb/s

Why Optical Modules Matter More Than Ever

Optical modules are the physical interface that interconnects switches, routers, and servers within a data center. Their strategic importance is reflected in three key dimensions.

First, optical modules are the primary enablers for overcoming bandwidth bottlenecks. While CPU and GPU performance continues to scale rapidly, insufficient network throughput can quickly become the limiting factor for distributed workloads. The achievable performance of AI clusters and cloud platforms is increasingly determined by interconnect bandwidth rather than raw compute power.

Second, optical modules directly influence power efficiency and port density. Hyperscale data centers deploy tens or even hundreds of thousands of optical modules. Small differences in module power consumption and form factor can translate into significant impacts on total cost of ownership (TCO), rack density, and cooling requirements.
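To make the scale effect concrete, the arithmetic can be sketched in a few lines of Python. All inputs below (module count, watts saved per module, and the PUE factor) are hypothetical values chosen for illustration, not measurements from any real deployment.

```python
HOURS_PER_YEAR = 8760


def fleet_power_delta_kw(module_count: int, watts_saved_per_module: float) -> float:
    """IT power saved across the whole fleet, in kilowatts."""
    return module_count * watts_saved_per_module / 1000.0


def annual_energy_delta_mwh(module_count: int,
                            watts_saved_per_module: float,
                            pue: float = 1.0) -> float:
    """Annual facility energy saved, in MWh.

    pue (power usage effectiveness) scales IT power up to facility power
    to account for cooling and distribution overhead.
    """
    facility_kw = fleet_power_delta_kw(module_count, watts_saved_per_module) * pue
    return facility_kw * HOURS_PER_YEAR / 1000.0


# Hypothetical scenario: 100,000 modules, 2 W saved per module, PUE of 1.3.
saved_kw = fleet_power_delta_kw(100_000, 2.0)
saved_mwh = annual_energy_delta_mwh(100_000, 2.0, pue=1.3)
print(f"{saved_kw:.0f} kW of IT load, {saved_mwh:.0f} MWh per year")
```

Under these assumed inputs, a 2 W difference per module adds up to 200 kW of continuous IT load, which is why per-module power is treated as a fleet-level cost driver.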

Finally, optical module choices affect network reliability and operational flexibility. The trade-off between mature, hot-pluggable optics and emerging technologies such as Co-Packaged Optics (CPO) has become a long-term architectural decision rather than a purely component-level choice.

In practice, the continuous evolution of optical modules is a prerequisite for data centers transitioning from the 400G era toward 800G, 1.6T, and beyond to support next-generation AI and HPC workloads.

Speed Roadmap: From 400G to 3.2T

Optical module development has converged on a de facto "speed-doubling" roadmap, with each new generation arriving approximately every two to three years. This cadence is largely dictated by switch ASIC SerDes evolution, power density limits, and ecosystem maturity.

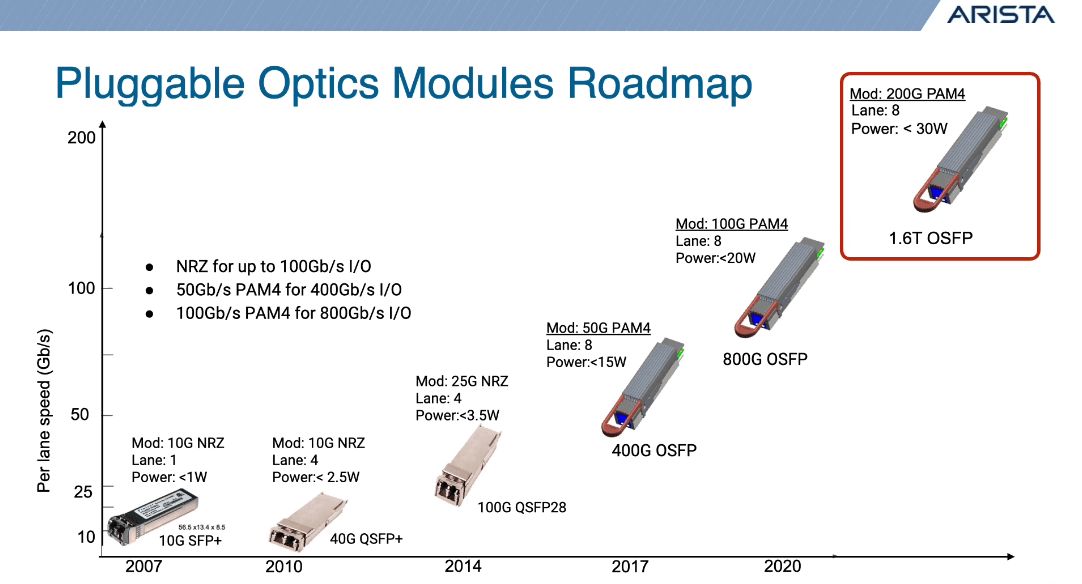

Figure 2: Roadmap showing the evolution of pluggable optics from 10G SFP+ to 1.6T OSFP, highlighting the increase in per-lane speeds and the transition to PAM4 modulation (Source: Arista)

- 400G: The Current Backbone

400G optical modules remain the cornerstone of today's hyperscale data centers. They are widely deployed in spine–leaf architectures and represent the most cost-effective high-speed solution for large-scale cloud networks.

Most 400G deployments use the QSFP-DD form factor and are typically implemented with four 100G optical lanes (DR4 or FR4), supported by eight 50G PAM4 electrical lanes on the host side. Thanks to a mature supply chain and broad interoperability, 400G continues to dominate large-volume deployments.

- 800G: The Bandwidth Engine of the AI Era

800G entered large-scale commercialization in 2023 and is now deployed primarily in AI training clusters and HPC environments. These networks interconnect large GPU clusters over high-speed Ethernet or InfiniBand fabrics, where east–west traffic far exceeds that of traditional cloud workloads.

Most 800G modules adopt an 8×100G architecture and are predominantly delivered in OSFP form factors due to higher power budgets. While QSFP-DD 800G variants exist, they are generally constrained by thermal and power limitations and are used selectively.

For AI-driven data centers, 800G is not a future option—it is rapidly becoming a present-day necessity.

- 1.6T: The Next Logical Step

1.6T optical modules are expected to enter early commercial deployment around 2025–2026. As the natural successor to 800G, 1.6T aims to further increase bandwidth density without proportionally increasing power consumption or physical footprint.

Emerging form factors such as OSFP-XD and OSFP-HS are being designed specifically to accommodate the higher thermal and electrical demands of 1.6T. The primary technical challenge lies in balancing higher SerDes rates, advanced DSP complexity, and manageable module power dissipation.

- 3.2T: Pushing the Physical Limits

3.2T represents the frontier of optical interconnect technology and is generally expected after 2027. At this scale, traditional pluggable optics face fundamental challenges related to electrical signal integrity, power consumption, and heat dissipation.

Future 3.2T implementations are expected to rely on 224G SerDes in a 16×200G architecture, or potentially on 8×400G lanes, which would require a further doubling of per-lane rates beyond the 224G generation. At this point, Co-Packaged Optics is widely regarded as a necessary architectural shift rather than an optional enhancement.
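The lane arithmetic behind each generation can be checked with a short Python sketch. The `LaneConfig` class and its field names are illustrative only. The 400G, 800G, and 3.2T entries follow the lane counts given in this section; the 8×200G entry for 1.6T is an assumption added here, since the article does not state a lane layout for that generation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LaneConfig:
    generation: str
    label: str           # which lanes are being counted
    lanes: int
    gbps_per_lane: int   # nominal payload rate per lane, excluding FEC overhead

    @property
    def aggregate_gbps(self) -> int:
        return self.lanes * self.gbps_per_lane


CONFIGS = [
    LaneConfig("400G", "host electrical", 8, 50),
    LaneConfig("400G", "optical DR4/FR4", 4, 100),
    LaneConfig("800G", "8x100G", 8, 100),
    LaneConfig("1.6T", "8x200G (assumed)", 8, 200),  # assumption, not from the article
    LaneConfig("3.2T", "16x200G", 16, 200),
    LaneConfig("3.2T", "8x400G", 8, 400),
]

for cfg in CONFIGS:
    print(f"{cfg.generation:>5}  {cfg.label:<18} "
          f"{cfg.lanes:>2} x {cfg.gbps_per_lane}G = {cfg.aggregate_gbps}G")
```

Note that the two 400G rows describe the same module from different sides: eight 50G electrical lanes on the host side carry the same aggregate as four 100G optical lanes on the fiber side.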

Core Enabling Technologies Behind Higher Speeds

The evolution from 400G to 3.2T is driven not by speed alone, but by coordinated advances across multiple technologies.

PAM4 modulation has replaced NRZ as the standard for high-speed optical transmission. By encoding two bits per symbol across four amplitude levels, it doubles data throughput at the same symbol rate. However, the tighter spacing between levels reduces signal margins, making advanced DSP indispensable.
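The trade-off can be sketched numerically. The snippet below is a simplified model, assuming an ideal channel and the same peak-to-peak swing for both formats; the 50 GBd symbol rate is a round illustrative figure (real lanes run somewhat faster to carry FEC overhead), and the function names are made up for this example.

```python
import math


def bits_per_symbol(levels: int) -> float:
    """Bits carried by one symbol with the given number of amplitude levels."""
    return math.log2(levels)


def bit_rate_gbps(baud_gbd: float, levels: int) -> float:
    """Raw bit rate in Gb/s for a symbol rate given in GBd."""
    return baud_gbd * bits_per_symbol(levels)


def eye_penalty_db(levels: int) -> float:
    """Ideal amplitude penalty relative to NRZ at the same peak-to-peak swing.

    With N levels the swing is split into N - 1 eyes, so each eye is
    1 / (N - 1) as tall as the single NRZ eye.
    """
    return 20 * math.log10(levels - 1)


nrz_rate = bit_rate_gbps(50.0, levels=2)    # one bit per symbol
pam4_rate = bit_rate_gbps(50.0, levels=4)   # two bits per symbol
pam4_penalty = eye_penalty_db(4)            # roughly 9.5 dB

print(f"NRZ:  {nrz_rate:.0f} Gb/s")
print(f"PAM4: {pam4_rate:.0f} Gb/s, ideal eye penalty {pam4_penalty:.2f} dB")
```

In this idealized model, PAM4 gives up roughly 9.5 dB of amplitude margin in exchange for the doubled bit rate, and equalization and error correction in the DSP have to make up that margin.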

High-performance DSPs serve as the "brains" of modern optical modules, performing equalization, error correction, and signal compensation. As speeds increase, DSP complexity and power efficiency become critical differentiators.

On the photonics side, Indium Phosphide (InP) remains a high-performance solution, particularly for long-reach applications, but faces challenges in cost and large-scale integration. Silicon Photonics (SiPh), leveraging mature CMOS manufacturing, has emerged as a key enabler for 800G and beyond by offering higher integration, lower cost, and scalable production.

Finally, SerDes evolution—from 56G to 112G and now toward 224G—forms the electrical foundation upon which each new optical generation is built.

Co-Packaged Optics (CPO) vs. Pluggable Optics

As bandwidth scales, the industry is increasingly evaluating Co-Packaged Optics as an alternative to traditional pluggable modules.

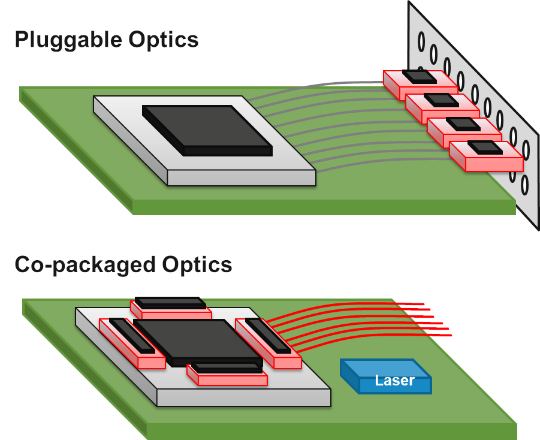

Figure 3: This diagram compares pluggable optics with co-packaged optics, showing how optics move closer to the switch chip. Co-packaged optics reduce electrical path length and improve signal efficiency.

CPO significantly reduces electrical path lengths by integrating optical engines directly with switch ASICs, resulting in superior signal integrity and substantially lower energy per bit. However, this comes at the cost of reduced flexibility, higher system-level integration complexity, and new challenges in serviceability and supply chain management.
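Energy per bit translates into module or port power through a simple product, sketched below in Python. The pJ/bit figures are hypothetical placeholders chosen only to show the calculation; they are not vendor specifications or measured values for either technology.

```python
def power_watts(throughput_gbps: float, picojoules_per_bit: float) -> float:
    """Power drawn at a given throughput and energy-per-bit figure.

    Gb/s times pJ/bit is 1e9 * 1e-12 J/s, i.e. milliwatts, so divide by 1000.
    """
    return throughput_gbps * picojoules_per_bit / 1000.0


# Hypothetical energy-per-bit values for an 800G port (illustration only).
ASSUMED_PLUGGABLE_PJ_PER_BIT = 15.0
ASSUMED_CPO_PJ_PER_BIT = 5.0

pluggable_w = power_watts(800, ASSUMED_PLUGGABLE_PJ_PER_BIT)
cpo_w = power_watts(800, ASSUMED_CPO_PJ_PER_BIT)

print(f"pluggable: {pluggable_w:.1f} W per port, CPO: {cpo_w:.1f} W per port")
print(f"saving across 64 ports: {(pluggable_w - cpo_w) * 64:.0f} W")
```

Because power scales linearly with throughput at a fixed pJ/bit, any per-bit saving grows in absolute terms with each speed generation, which is part of why CPO draws more attention at 1.6T and 3.2T.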

Pluggable optics, by contrast, remain highly flexible, hot-swappable, and supported by a mature ecosystem. As a result, they are expected to remain the dominant solution for the next five to seven years, even as CPO gains traction in ultra-high-bandwidth scenarios.

A Practical Upgrade Roadmap for Data Centers

Data center upgrades should be guided by workload characteristics rather than peak bandwidth alone.

For traditional cloud and enterprise workloads, 400G continues to offer the best balance of cost, performance, and maturity. AI, ML, and HPC-focused operators should prioritize 800G deployments for GPU cluster interconnection and high-speed backbones.

Infrastructure readiness is equally critical. Single-mode fiber is a prerequisite for future-proof deployments, while rack-level power delivery and cooling must be evaluated carefully as module power increases. New generations of switches supporting 112G and 224G SerDes are essential enablers for higher speeds.

A phased upgrade strategy is often the most economical approach. Many operators will deploy 800G at the core while pushing 400G toward the access layer, gradually introducing 1.6T for next-generation AI clusters as ecosystems mature.
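The guidance in this section can be captured as a small lookup, sketched below in Python. The enum, dictionaries, and function names are hypothetical and only encode the recommendations stated above; a real planning tool would also weigh fiber plant, power, cooling, and switch SerDes support.

```python
from enum import Enum


class Workload(Enum):
    CLOUD = "traditional cloud"
    ENTERPRISE = "enterprise"
    AI_ML = "AI/ML"
    HPC = "HPC"


SPEED_ORDER = ["400G", "800G", "1.6T", "3.2T"]

# Starting-point speeds per workload, as recommended in this section.
BASELINE_SPEED = {
    Workload.CLOUD: "400G",
    Workload.ENTERPRISE: "400G",
    Workload.AI_ML: "800G",
    Workload.HPC: "800G",
}

# Phased layout: 800G at the core, 400G toward the access layer,
# 1.6T introduced for next-generation AI clusters as the ecosystem matures.
PHASED_TIERS = {"core": "800G", "access": "400G", "next_gen_ai_cluster": "1.6T"}


def baseline_speed(workload: Workload) -> str:
    """Recommended starting speed for a workload class."""
    return BASELINE_SPEED[workload]


def needs_upgrade(current_speed: str, workload: Workload) -> bool:
    """True if the current speed is below the recommended baseline."""
    target = baseline_speed(workload)
    return SPEED_ORDER.index(current_speed) < SPEED_ORDER.index(target)


print(baseline_speed(Workload.AI_ML))
print(needs_upgrade("400G", Workload.HPC))
```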

Conclusion

The transition from 400G to 3.2T optical modules is not simply a race for higher speeds—it represents a fundamental shift in how data center networks are designed, powered, and scaled. Higher bandwidth density, improved energy efficiency, and tighter integration will define the next decade of data center infrastructure. For operators and vendors alike, understanding this evolution and planning accordingly is no longer optional. It is a strategic imperative in an AI-driven digital economy.
