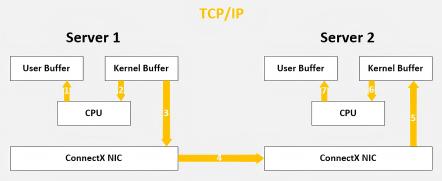

High-performance computing, big data analytics, and other low-latency applications have outgrown the existing TCP/IP software and hardware architecture, whose protocol processing consumes a large share of CPU time. The shortfall shows up as excessive processing delay (up to tens of microseconds), with further latency added on the host by multiple memory copies, interrupt handling, context switching, and complex TCP/IP protocol processing, and in the network by network delay, store-and-forward mechanisms, and packet loss.

Whether in traditional HPC applications or emerging deep learning frameworks, RDMA communication has become a critical component. This technology, based on remote direct memory access, significantly improves the speed and efficiency of data exchange. Especially with the rise of cloud computing and deep learning, RDMA has been widely adopted in Ethernet environments. Below, we will explore the technical definition, core principles, and key advantages of RDMA in detail. By the end, you will understand why RDMA offers a superior solution to the aforementioned challenges.

I. What is RDMA?

Traditional TCP/IP technology requires data packets to pass through the operating system and other software layers during processing, consuming substantial server resources and memory bandwidth. Data must be repeatedly copied and moved between system memory, processor caches, and network controller buffers, placing a heavy burden on the CPU and memory. The severe "mismatch" between network bandwidth, processor speed, and memory bandwidth further exacerbates network latency effects.

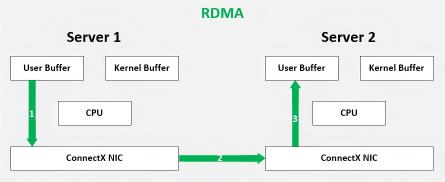

RDMA (Remote Direct Memory Access) enables one computer to directly access another computer's memory without time-consuming processor involvement. It allows data to be rapidly transferred from one system to a remote system's memory without impacting the operating system. The diagram below illustrates RDMA's principles and its comparison with TCP/IP architecture.

Put simply, RDMA combines suitable NIC hardware and networking technology so that Server 1's NIC can read and write Server 2's memory directly, achieving high bandwidth, ultra-low latency, and minimal resource overhead. Applications take no part in moving the data: they only specify the memory addresses to read or write, initiate the transfer, and wait for it to complete.

II. Which Network Protocols Support RDMA?

2.1 InfiniBand

InfiniBand is a native RDMA architecture designed for HPC (High-Performance Computing), featuring lossless, low-latency networking with RDMA as its core function. It defines the link layer, transport layer, and management layer for tightly coupled clusters. With its ultra-low latency, high bandwidth, and mature tooling, InfiniBand has long dominated the HPC field and remains unmatched in high-speed, large-scale cluster applications. However, as a standalone network technology, it requires dedicated NICs and switches.

2.2 RoCE

RoCE (RDMA over Converged Ethernet) carries RDMA traffic over Ethernet. Its current version, RoCEv2, encapsulates RDMA traffic in UDP over IPv4 or IPv6, which makes it routable across L3 networks. It relies on data center controls (e.g., PFC for flow control, ECN for congestion management) to maintain low latency by mitigating packet loss and congestion.
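To make the UDP encapsulation concrete, here is a minimal sketch that packs the two headers a RoCEv2 NIC places in front of an RDMA payload: a UDP header with destination port 4791 and the 12-byte InfiniBand Base Transport Header (BTH). The function names, the example queue pair number, and the choice to leave the UDP checksum at zero are assumptions made for illustration; the extended headers, payload, and trailing ICRC of a real packet are omitted.

```python
import struct

ROCEV2_UDP_DPORT = 4791           # UDP destination port registered for RoCEv2
OPCODE_RC_RDMA_WRITE_ONLY = 0x0A  # Reliable Connection, "RDMA WRITE Only"


def build_bth(opcode, dest_qp, psn, pkey=0xFFFF, ack_req=False):
    """Pack a 12-byte Base Transport Header (illustrative helper, not a library API)."""
    flags = 0  # solicited event, migration state, pad count, version all zero
    # 32-bit word: upper 8 bits reserved / congestion bits (zero here), lower 24 bits = destination QP
    qp_word = dest_qp & 0xFFFFFF
    # 32-bit word: top bit = acknowledge request, lower 24 bits = packet sequence number
    psn_word = (int(ack_req) << 31) | (psn & 0xFFFFFF)
    return struct.pack("!BBHII", opcode, flags, pkey, qp_word, psn_word)


def build_udp_header(src_port, payload_len):
    """Pack an 8-byte UDP header; the checksum is left at 0 in this sketch."""
    return struct.pack("!HHHH", src_port, ROCEV2_UDP_DPORT, 8 + payload_len, 0)


bth = build_bth(OPCODE_RC_RDMA_WRITE_ONLY, dest_qp=0x000123, psn=42, ack_req=True)
udp = build_udp_header(src_port=49152, payload_len=len(bth))
print(len(udp), len(bth))
```

Because the outer headers are ordinary IP and UDP, standard L3 switches can route the packet without understanding the RDMA transport inside it.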

2.3 iWARP

iWARP (Internet Wide Area RDMA Protocol) implements RDMA on top of a reliable transport stack defined by IETF standards: RDMAP over DDP, carried either over MPA/TCP or over SCTP. Because TCP is reliable and connection-oriented, iWARP is more attractive in packet-loss-prone or wide-area network (WAN) environments, though it typically does not match the latency of UDP-based RoCEv2 or native InfiniBand.

III. Advantages of RDMA

At its core, RDMA is a "smart NIC with optimized software architecture" technology for high-speed remote memory access. It achieves high-performance remote data transfer through the following mechanisms:

3.1 Zero-Copy

Zero-copy networking enables the NIC to transfer data directly to and from application memory, eliminating the copy between application memory and kernel space and drastically reducing transmission latency.
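As an in-process analogy only (this is not RDMA code), the difference between copying a buffer and handing out a reference to it can be sketched with Python's `memoryview`. The function names are hypothetical; the point is that the zero-copy path exposes the application's own memory instead of duplicating it.

```python
def send_with_copy(app_buffer, offset, length):
    """Copying path: the bytes are duplicated, as in a socket send into a kernel buffer."""
    return bytes(app_buffer[offset:offset + length])


def send_zero_copy(app_buffer, offset, length):
    """Zero-copy path: return a view onto the same memory; no bytes are duplicated."""
    return memoryview(app_buffer)[offset:offset + length]


app_buffer = bytearray(b"A" * 8)
copied = send_with_copy(app_buffer, 0, 4)
view = send_zero_copy(app_buffer, 0, 4)

# Changing the application buffer is visible through the view but not through the copy.
app_buffer[0] = ord("B")
print(copied, bytes(view))
```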

3.2 Kernel Bypass

Kernel bypass allows applications to post commands directly to the NIC without kernel involvement. RDMA requests travel from user space to the local NIC and across the network to the remote NIC, minimizing context switches between kernel space and user space.

3.3 CPU Resource Offload

One-sided remote memory access consumes no CPU cycles on the target machine for the data transfer itself. No remote process or CPU intervention is needed, and remote CPU caches are not polluted.

3.4 Message-Based Transactions

Data is processed as discrete messages (not streams), removing the need for applications to split "streams" into messages.
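For contrast, this is the kind of work a stream transport pushes onto the application. The sketch below shows length-prefixed framing, one common way to recover message boundaries from a byte stream such as TCP; the 4-byte prefix and the class name are assumptions for illustration. With a message-based transport this layer is unnecessary, because each receive completion already corresponds to one whole message.

```python
import struct


def frame(message):
    """Prefix a message with its length as a 4-byte big-endian integer."""
    return struct.pack("!I", len(message)) + message


class StreamDeframer:
    """Rebuild discrete messages from arbitrarily chunked stream data."""

    def __init__(self):
        self._buf = bytearray()

    def feed(self, chunk):
        """Add received bytes and return every message that is now complete."""
        self._buf += chunk
        messages = []
        while len(self._buf) >= 4:
            (length,) = struct.unpack_from("!I", self._buf)
            if len(self._buf) < 4 + length:
                break  # the rest of this message has not arrived yet
            messages.append(bytes(self._buf[4:4 + length]))
            del self._buf[:4 + length]
        return messages


# A stream may deliver the bytes in chunks that ignore message boundaries.
stream = frame(b"hello") + frame(b"rdma")
deframer = StreamDeframer()
received = []
for i in range(0, len(stream), 3):
    received.extend(deframer.feed(stream[i:i + 3]))
print(received)
```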

3.5 Scatter/Gather Support

RDMA supports local processing of multiple scatter/gather entries: the NIC can read several separate memory buffers and send them as a single message, or receive a single message and write it into several separate memory buffers.
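The same idea exists at the operating-system level as vectored I/O, which gives a runnable analogy. The sketch below uses `os.writev` and `os.readv` over a pipe (Unix-only); it is not RDMA code, and the `demo` function and buffer sizes are assumptions. In the verbs API the corresponding concept is the list of scatter/gather entries attached to a work request.

```python
import os


def demo():
    """Gather two buffers into one write, then scatter one read into two buffers."""
    read_fd, write_fd = os.pipe()
    try:
        header = b"HDR:"
        body = b"payload"
        # Gather: two separate buffers leave as one contiguous byte sequence.
        sent = os.writev(write_fd, [header, body])

        # Scatter: one incoming byte sequence lands in two separate buffers.
        header_buf = bytearray(4)
        body_buf = bytearray(7)
        got = os.readv(read_fd, [header_buf, body_buf])
        return sent, got, bytes(header_buf), bytes(body_buf)
    finally:
        os.close(read_fd)
        os.close(write_fd)


print(demo())
```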

In specific remote memory read/write scenarios, the "remote virtual memory address" used for RDMA operations is transmitted within the RDMA message. Remote applications only need to register the corresponding memory buffer locally on the network card, requiring no additional operations afterward. Beyond initial steps like connection establishment and memory registration calls, the remote node's CPU does not need to provide service during the entire RDMA data transfer process, incurring no additional overhead.
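The flow described above can be modeled with a small toy. This is a simulation, not the verbs API: `ToyNic`, `register`, `handle_write`, and the `app_wakeups` counter are all hypothetical names. It shows the three ideas from the paragraph: the target registers a buffer once, the initiator names a remote virtual address and key in each request, and the target application is never invoked during the transfer.

```python
import secrets
from dataclasses import dataclass


@dataclass
class MemoryRegion:
    buf: bytearray
    base_addr: int  # virtual address the region starts at (made up for the toy)
    rkey: int       # key the initiator must present to access this region


class ToyNic:
    """Toy model of the target-side NIC for one-sided writes."""

    def __init__(self):
        self._regions = {}
        self.app_wakeups = 0  # never incremented: the target app stays idle

    def register(self, buf, base_addr):
        """One-time setup by the target application; returns the remote key."""
        rkey = secrets.randbits(32)
        while rkey in self._regions:
            rkey = secrets.randbits(32)
        self._regions[rkey] = MemoryRegion(buf, base_addr, rkey)
        return rkey

    def handle_write(self, remote_addr, rkey, payload):
        """Place an incoming write directly into registered memory."""
        region = self._regions.get(rkey)
        if region is None:
            raise PermissionError("unknown rkey")
        offset = remote_addr - region.base_addr
        if offset < 0 or offset + len(payload) > len(region.buf):
            raise ValueError("write falls outside the registered region")
        region.buf[offset:offset + len(payload)] = payload


# Target side: register once, then share (address, rkey) with the peer out of band.
target_nic = ToyNic()
target_buf = bytearray(16)
rkey = target_nic.register(target_buf, base_addr=0x1000)

# Initiator side: the request carries the remote virtual address and the key.
target_nic.handle_write(remote_addr=0x1004, rkey=rkey, payload=b"RDMA")
print(bytes(target_buf), target_nic.app_wakeups)
```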

IV. Types of RDMA Networks

4.1 InfiniBand Network

A complete InfiniBand network comprises a Subnet Manager (SM), IB NICs, IB switches, and dedicated cables and optical modules.

Key Features:

- Centralized control plane: The SM computes and distributes forwarding tables (no distributed routing).
- SM roles: Partitioning, QoS configuration, etc.
- Requires proprietary cabling and optical module systems.
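The centralized control plane can be illustrated with a simplified sketch: a single function that sees the whole topology and computes, for every switch, which port leads toward each destination LID. The breadth-first, fewest-hops rule, the topology format, and all names are assumptions for illustration; production subnet managers use more elaborate routing engines.

```python
from collections import deque


def compute_forwarding_tables(links, lids):
    """Return {switch: {lid: out_port}} using fewest-hop paths.

    links: {switch: {port: neighbor_name}}
    lids:  {host_name: lid}
    """
    tables = {switch: {} for switch in links}
    for host, lid in lids.items():
        # Breadth-first search outward from the destination host.
        visited = {host}
        queue = deque([host])
        while queue:
            node = queue.popleft()
            for switch, ports in links.items():
                if switch in visited:
                    continue
                for port, neighbor in sorted(ports.items()):
                    if neighbor == node:
                        # First time this switch is reached: this port is on a shortest path.
                        tables[switch][lid] = port
                        visited.add(switch)
                        queue.append(switch)
                        break
    return tables


# Hypothetical two-leaf, one-spine fabric.
links = {
    "leaf1": {1: "hostA", 2: "spine"},
    "leaf2": {1: "hostB", 2: "spine"},
    "spine": {1: "leaf1", 2: "leaf2"},
}
lids = {"hostA": 1, "hostB": 2}
tables = compute_forwarding_tables(links, lids)
print(tables)
```

Switches in this model do no route computation of their own; they only look up the destination LID in the table they were handed.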

4.2 RoCE Network

Key Features:

- NICs: NVIDIA (ConnectX series), Intel, Broadcom. Single-port speeds start at 50 Gbps, and commercial products now support up to 400 Gbps.
- Switches: Cisco, HPE, Arista with Broadcom Tomahawk ASICs (TH3 mainstream, TH4 emerging).
- Ethernet-native: Reuses standard cabling and optical module systems.
- Cost-effective: Supports both RDMA and traditional Ethernet traffic.

Challenges:

- Lossless Ethernet in large-scale clusters: Requires meticulous tuning of switch parameters like headroom, PFC, and ECN, resulting in higher engineering complexity.
- Scalability: Slightly lower throughput than InfiniBand at scale. Additionally, within the RoCE ecosystem, NVIDIA ConnectX series network interface cards hold a significant market share.

V. InfiniBand vs. RoCE

InfiniBand: Excels in forwarding efficiency, fault recovery, scalability, and O&M simplicity for specialized HPC/AI workloads.

RoCE: A pragmatic choice for most intelligent computing, balancing performance and cost.

| Parameter | InfiniBand | RoCE |
| --- | --- | --- |
| End-to-end delay | 2 µs | 5 µs |
| Flow control mechanism | Credit-based flow control | PFC/ECN, DCQCN |
| Forwarding mode | Forwarding based on Local ID (LID) | IP-based forwarding |
| Load balancing mode | Packet-by-packet adaptive routing | ECMP routing |
| Recovery | Self-Healing Interconnect Enhancement for Intelligent Datacenters (SHIELD) | Route convergence |
| Network configuration | Zero configuration through UFM | Manual configuration |
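The load-balancing row of the table can be illustrated with a simplified sketch. ECMP picks a path by hashing a flow's header fields, so every packet of one flow follows the same path; per-packet schemes spread a single flow across all paths. The CRC32 hash, the 5-tuple layout, and the round-robin rule (a stand-in for real adaptive routing, which reacts to link load) are assumptions for illustration.

```python
import zlib
from collections import Counter


def ecmp_path(flow, num_paths):
    """Flow-based choice: hash the flow's header fields; all its packets share one path."""
    key = "|".join(str(field) for field in flow).encode()
    return zlib.crc32(key) % num_paths


def per_packet_path(sequence_number, num_paths):
    """Per-packet choice: round-robin stand-in for adaptive routing."""
    return sequence_number % num_paths


NUM_PATHS = 4
# Hypothetical large flow: (src IP, dst IP, protocol, src port, dst port).
flow = ("10.0.0.1", "10.0.0.2", 17, 49152, 4791)

ecmp_load = Counter(ecmp_path(flow, NUM_PATHS) for _ in range(1000))
spray_load = Counter(per_packet_path(seq, NUM_PATHS) for seq in range(1000))

print("ECMP paths used:", len(ecmp_load))
print("Per-packet load:", dict(spray_load))
```

With a few large flows, as is common in AI training traffic, flow-based hashing can leave some links idle while others are congested, which is one reason per-packet schemes are attractive.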

VI. Summary

In recent years, one of the core directions in data center network technology has been to simplify network architecture design, accelerate deployment, and streamline operations and maintenance. Adopting solutions such as unnumbered BGP reduces the dependency on complex IP address planning, minimizes configuration errors, and improves overall efficiency. At the same time, real-time fault detection tools such as WJH (What Just Happened) provide deep operational insight, significantly speeding up the identification and resolution of network issues.

Within this trend, AICPLIGHT, as a solution provider specializing in lossless RDMA networks, delivers both InfiniBand and RoCE-based lossless network solutions. Its core mission is to establish stable, lossless network environments for customers while providing robust high-performance computing support capabilities.
