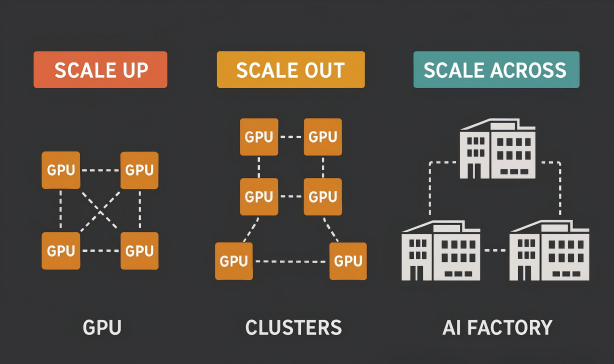

As AI model parameters surge from billions to trillions, computing power demands are growing exponentially. The traditional single data center model has reached its physical limits, driving the evolution of AI infrastructure through three key phases: Scale-Up, Scale-Out, and Scale-Across.

Together, these architectures define the core framework of modern AI computing. Throughout this journey, optical transceivers have been the silent enablers — the "hardware backbone" that powers high-speed interconnects and determines how far and how efficiently computing can scale.

Figure 1: Scale-Up, Scale-Out, and Scale-Across Differences

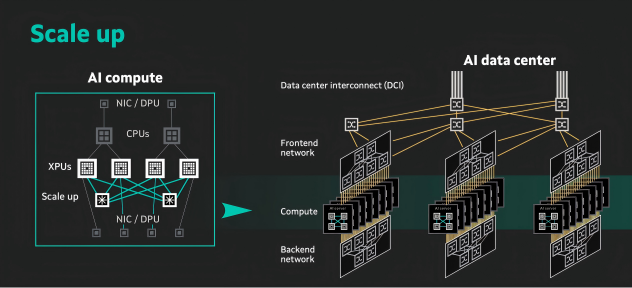

Scale-Up: Vertical Expansion for Extreme Single-Node Performance

Scale-Up enhances performance by vertically integrating hardware resources within a single node or rack, creating powerful supernodes. This approach combines more GPUs or accelerators within one enclosure, using high-speed interconnects like NVIDIA NVLink to enable direct chip-to-chip communication and highly synchronized processing.

Optical modules play a growing role here. Short-reach optical transceivers, typically running over multimode fiber across a few meters to tens of meters, can replace copper connections where electrical signal loss would otherwise limit reach and bandwidth. For example, NVIDIA GB200 NVL72 integrates 72 GPUs into a single NVLink domain that operates as a unified compute unit with terabyte-per-second-scale aggregate bandwidth. Inside that rack the NVLink links run over copper cabling; 800G-class short-reach optical modules become the natural fit once a Scale-Up domain has to extend beyond the reach of copper.

Yet, Scale-Up faces clear physical constraints. Power, cooling, and rack space form natural ceilings, while high-density integration poses thermal and cost challenges. Optimizing electro-optical conversion efficiency and power consumption will be critical to pushing this model further.

Figure 2: Scale-Up interconnect architecture (Source: Marvell)

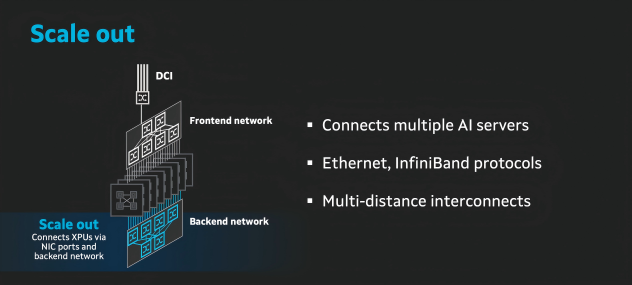

Scale-Out: Horizontal Expansion through Clustered Architecture

When single-node performance plateaus, Scale-Out takes over — linking multiple servers or racks into a massive compute cluster through high-speed networking. In this model, optical transceivers shift from a supporting role to the core data backbone, directly influencing scalability, efficiency, and fault tolerance.

Within a single data center, Scale-Out typically relies on InfiniBand or high-performance Ethernet networks. Here, 800G optical transceivers are the cornerstone:

- 800G OSFP modules for InfiniBand NDR (twin-port, 2x400G) support sub-2 μs latency and lossless data transfer through native RDMA, which suits parallel GPU communication.

- Ethernet-based 800G OSFP 2xDR4 and 800G OSFP 2xSR4 modules use PAM4 modulation to carry high-speed links over distances from tens of meters (SR4, multimode fiber) up to 500 m (DR4, single-mode fiber), minimizing communication overhead.
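To make the role of PAM4 concrete, the short Python sketch below works through the lane arithmetic behind an 800G module. It assumes eight lanes at a nominal 100 Gb/s each and ignores FEC and line-coding overhead, so real symbol rates are somewhat higher; the function and constant names are illustrative only.

```python
import math

def bits_per_symbol(levels: int) -> int:
    """Bits carried by one symbol that can take `levels` distinct amplitudes."""
    return int(math.log2(levels))

def symbol_rate_gbaud(lane_rate_gbps: float, levels: int) -> float:
    """Symbol rate needed to carry `lane_rate_gbps` at the given level count."""
    return lane_rate_gbps / bits_per_symbol(levels)

# Assumptions: 2xDR4 / 2xSR4 means two groups of four lanes,
# each lane carrying a nominal 100 Gb/s payload (overhead ignored).
LANES = 8
LANE_RATE_GBPS = 100.0

total_gbps = LANES * LANE_RATE_GBPS
pam4_gbaud = symbol_rate_gbaud(LANE_RATE_GBPS, levels=4)  # PAM4: 4 levels
nrz_gbaud = symbol_rate_gbaud(LANE_RATE_GBPS, levels=2)   # NRZ: 2 levels

print(f"Aggregate rate: {total_gbps:.0f} Gb/s")
print(f"PAM4 symbol rate per lane: {pam4_gbaud:.0f} GBd")
print(f"NRZ symbol rate per lane:  {nrz_gbaud:.0f} GBd")
```

Because PAM4 encodes two bits per symbol, each lane needs only half the symbol rate that two-level NRZ signaling would require for the same bit rate, which is what makes 100 Gb/s lanes practical.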

This architecture excels in scalability and reliability. Nodes can be added or replaced without disrupting the cluster — perfect for data-parallel workloads. However, data center-wide constraints like power capacity, cooling efficiency, and floor space become new bottlenecks. Large-scale deployment of 800G modules must balance bandwidth expansion with energy efficiency, while intelligent DSP-based modules help simplify network management and synchronization.

Figure 3: Scale-Out interconnect architecture (Source: Marvell)

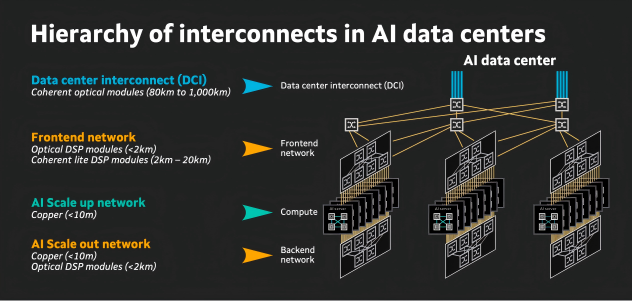

Scale-Across: Cross-Regional Expansion Beyond Physical Boundaries

When both vertical and horizontal scaling reach their limits, Scale-Across emerges — interconnecting geographically distributed data centers through ultra-high-speed optical networks. The result is a "global compute fabric", enabling computing power to be allocated and managed as flexibly as electricity.

As NVIDIA CEO Jensen Huang puts it:

"The AI factories of the future won't just be large — they'll connect cities, countries, and continents together."

To realize this vision, long-reach, low-latency, high-capacity optical transceivers are essential. They serve as the arteries of inter-data-center communication, defining the upper limits of distributed AI performance.

Key enabling technologies include:

- Spectrum-XGS Ethernet + 1.6T coherent optical modules, featuring adaptive congestion control that optimizes transmission across distances from tens to hundreds of kilometers.

- Hollow-core fiber + coherent optics, reducing latency by up to 30% and signal loss by more than 50% compared with conventional solid-core glass fiber, which suits long-haul DCI (Data Center Interconnect) applications.

- High-speed coherent modules supporting 800G–1.6T per wavelength across hundreds of kilometers, providing the backbone for global-scale compute collaboration.

- Optical Circuit Switching (OCS) that dynamically reconfigures light paths without electrical conversion, minimizing delay and power consumption in high-traffic networks.
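The latency advantage of hollow-core fiber follows from simple propagation arithmetic: light travels more slowly in glass than in air. The Python sketch below estimates one-way propagation delay over inter-data-center distances. The two group-index values are typical textbook approximations chosen for illustration, not figures from any specific fiber product, and the calculation ignores switching, amplification, and DSP delay.

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum, km/s

# Assumed group indices (illustrative approximations):
N_SOLID_CORE = 1.468    # conventional silica single-mode fiber
N_HOLLOW_CORE = 1.0003  # light guided mostly in air

def one_way_latency_ms(distance_km: float, group_index: float) -> float:
    """Propagation delay in milliseconds over the given fiber length."""
    return distance_km * group_index / C_KM_PER_S * 1000.0

def latency_reduction(n_reference: float, n_new: float) -> float:
    """Fractional delay saved by moving from n_reference to n_new."""
    return 1.0 - n_new / n_reference

for distance_km in (10, 100, 500):
    solid = one_way_latency_ms(distance_km, N_SOLID_CORE)
    hollow = one_way_latency_ms(distance_km, N_HOLLOW_CORE)
    print(f"{distance_km:>4} km: solid-core {solid:.3f} ms, "
          f"hollow-core {hollow:.3f} ms")

saving = latency_reduction(N_SOLID_CORE, N_HOLLOW_CORE)
print(f"Propagation delay reduction: {saving:.1%}")
```

Under these assumptions the saving lands in the neighborhood of 30%, in line with the figure quoted above. In absolute terms the gain grows linearly with distance, which is why it matters most for Scale-Across links.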

Figure 4: Hierarchy of interconnects in AI data centers (Source: Marvell)

Industry Impact and Future Outlook

The rise of Scale-Across is reshaping the global data center ecosystem — and the optical module industry stands at the heart of this transformation.

Accelerated optical communication innovation: The demand for higher bandwidth and lower latency will speed up commercialization of 800G/1.6T/3.2T coherent modules, hollow-core fibers, and OCS technologies. Meanwhile, Co-Packaged Optics (CPO) — integrating transceivers directly with switch chips — will further reduce latency and power while overcoming bandwidth bottlenecks.

Computing and networking convergence: Future "AI factories" will be distributed globally based on factors like energy cost, land availability, and cooling conditions. Optical transceivers will be the key enabler of compute orchestration — with 800G short-reach modules for intra-data center interconnects and 1.6T coherent modules for cross-regional computing links.

Three-tier convergence for intelligent computing networks: Tomorrow's AI infrastructure will seamlessly integrate Scale-Up + Scale-Out + Scale-Across architectures. Short-reach optics will power high-performance nodes, 800G modules will connect data center clusters, and 1.6T–3.2T coherent optics will drive global compute synchronization — creating a unified, intelligent computing network.
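One rough way to picture the three tiers is as a mapping from link distance to interconnect class. The Python sketch below is only an illustration: the `pick_tier` helper and both distance thresholds are hypothetical values chosen for this example, not limits defined by any standard or vendor.

```python
from enum import Enum

class Tier(Enum):
    SCALE_UP = "Scale-Up"          # inside a node or rack
    SCALE_OUT = "Scale-Out"        # across racks in one data center
    SCALE_ACROSS = "Scale-Across"  # between data centers

# Hypothetical thresholds, for illustration only.
RACK_REACH_M = 5.0
DATA_CENTER_REACH_M = 2_000.0

def pick_tier(distance_m: float) -> Tier:
    """Classify a link by its length into one of the three scaling tiers."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    if distance_m <= RACK_REACH_M:
        return Tier.SCALE_UP
    if distance_m <= DATA_CENTER_REACH_M:
        return Tier.SCALE_OUT
    return Tier.SCALE_ACROSS

for distance_m in (0.5, 300.0, 80_000.0):
    print(f"{distance_m:>9.1f} m -> {pick_tier(distance_m).value}")
```

In practice the boundaries are set by topology and workload as much as by distance, so a real planner would weigh latency budgets, power, and cost alongside reach.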

Conclusion

From Scale-Up to Scale-Out to Scale-Across, the evolution of AI computing is a relentless quest to transcend physical limits and achieve higher collaborative efficiency — and optical modules have been the constant enabler throughout.

- In Scale-Up, short-reach optics remove single-node bottlenecks.

- In Scale-Out, 800G modules build the backbone of data center networks.

- In Scale-Across, 1.6T/3.2T coherent modules power global-scale computing collaboration.

As optical communication continues to advance, transceivers will evolve toward higher bandwidth, lower latency, and greater energy efficiency — forming the foundation of next-generation AI applications such as physical AI and precision medicine. Ultimately, optical technology will redefine how humanity builds and uses computing power, fueling the next stage of digital economic growth.
